1-Page Summary

Every man-made object, environment, or program in our world is designed. From doorknobs to smartphone apps, design pervades our lives to the point that it often becomes completely invisible. When we struggle with one of these designs, we assume that our difficulties are our own fault, or that we’re just not smart enough to figure it out. But that blame is misplaced. More often than not, the true culprit in cases of “human error” is actually bad design.

In The Design of Everyday Things (originally released in 1988 under the title The Psychology of Everyday Things and revised in 2013), cognitive psychologist and engineer Don Norman explores the ways people understand and interact with the physical environment (this is sometimes referred to as “user experience”). In doing so, he makes all of us smarter consumers and helps designers create products that work with users, rather than against them.

Interacting With Objects

At its core, design is any human influence on the physical world. This applies to everything from ancient architectural marvels to the layout of clothes in your closet.

When we interact with design, we’re guided by the principles of discoverability and understanding. Discoverability refers to whether a user can figure out what an object is and how to use it without considerable effort. Discoverability answers the question, “How do I use this thing?” Understanding, in this context, refers to the user’s ability to make meaning out of the discoverable features of the object. Understanding answers the questions, “What is this, and why do I want to use it in the first place?”

Focusing on these factors is a hallmark of human-centered design, which is a design philosophy that flips the traditional design process on its head by focusing on human needs and behaviors first and designing products to fit those needs, rather than designing a product and hoping that users figure out how to use it.

How Do We Know How to Use an Object?

To design for human needs, we need to understand how people interact with design. There are six design principles that influence how we interact with an object: affordances, signifiers, mapping, feedback, models, and the system image.

Affordances are the finite number of ways in which a user can possibly interact with a given object. They answer the question, “What is this thing for?” For example, chairs typically have a flat surface, which we intuitively recognize as an indicator of support. In other words, the look of a chair suggests that it is for sitting on.

Signifiers are signals that draw the user’s attention to an affordance they may not have intuitively discovered, like a “click here” button on a website or a “push” sign on a door. For designers, signifiers are more important than affordances: The most sophisticated technology is pretty useless if a user can’t find the “on” button.

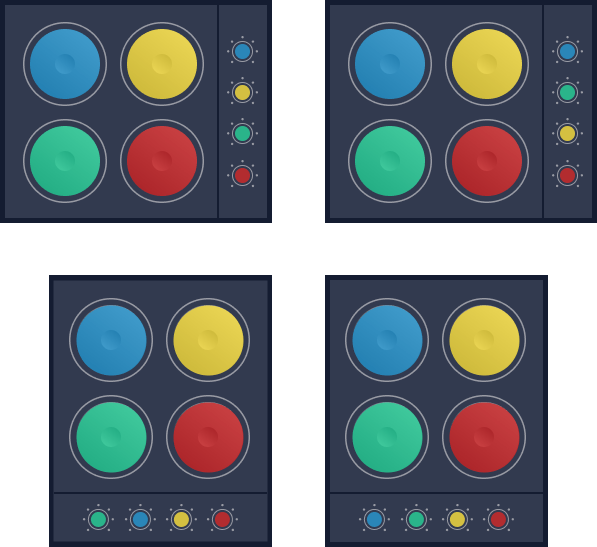

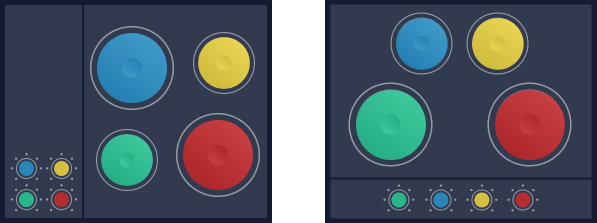

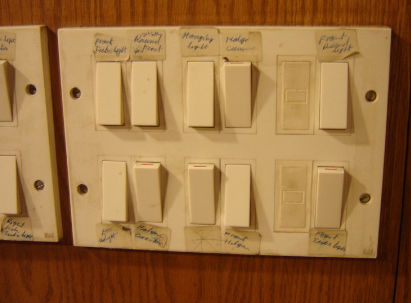

Mapping uses the position of two objects to communicate the relationship between them. For example, if you see a row of three lights and a panel of three switches, natural mapping would mean the position of the switch corresponds to the position of the light it controls. Mapping is not universal since culture can influence how we think about direction and spatial relationships.

Feedback is a sensory signal that alerts the user that what they’re doing to an object is having some effect. Feedback can tell us when something is working as expected, but more importantly, when it’s not working how we want. In a car, a dashboard alert light or the sound of squeaking brakes are both sources of feedback that let us know something is wrong.

Models (also called conceptual models or mental models) are mental images of an object and how it works based on affordances, signifiers, mapping, and feedback. Mental models stem from the universal instinct to organize information into cohesive stories. But these stories are not always accurate, and false mental models of a design can cause confusion.

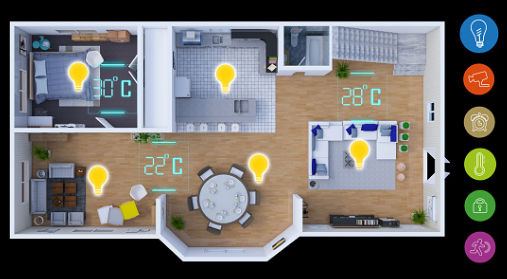

- For example, many people have an inaccurate model of their home thermostat. They assume the thermostat controls a valve that opens a certain amount based on the setting, and that setting it higher will warm the room faster. In reality, most thermostats are a simple on/off switch, so setting a higher temperature has no effect on how fast the room warms up.
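To make the on/off model concrete, here is a minimal Python sketch. It is an illustration only, not something from the book: the function name, the starting temperature, and the heating rate are all hypothetical, and heat loss is ignored for simplicity. The thermostat only decides whether the heater is on, so a higher setting does not bring the room to a comfortable temperature any sooner.

```python
def minutes_to_reach(target_temp, setpoint, start_temp=15.0, heat_rate=0.5):
    """Simulate an on/off thermostat and count the minutes until the room
    reaches target_temp.

    Assumptions (hypothetical, for illustration): the heater adds a fixed
    heat_rate in degrees per minute whenever it is on, and the room loses
    no heat.
    """
    temp = start_temp
    minutes = 0
    while temp < target_temp:
        # The only decision the thermostat makes: heater on or off.
        heater_on = temp < setpoint
        if not heater_on:
            # The heater shut off below the target, so it is never reached.
            return None
        temp += heat_rate
        minutes += 1
    return minutes


# Time to warm the room to 20 degrees with two different settings.
print(minutes_to_reach(target_temp=20, setpoint=20))  # 10
print(minutes_to_reach(target_temp=20, setpoint=30))  # 10
```

Both calls take the same number of minutes, because the heater runs at the same rate either way. The higher setting only changes when the heater eventually switches off.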

The system image is the sum total of the information we have about an object, including both its physical properties and information from user manuals, product websites, or past experience. The system image is the only way designers can communicate their model of how something works to the user.

Cognition, Emotion, and Behavior

Clearly, the way we think influences how we interact with objects, but designers often underestimate the role of psychology in user interaction.

The Seven Stages of Action

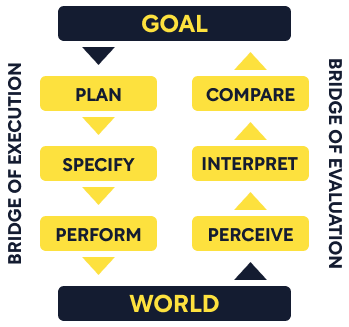

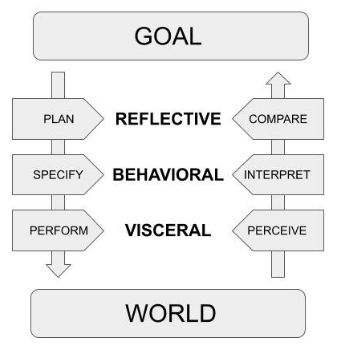

When we interact with an object, we face two “gulfs” of understanding: the Gulf of Execution (figuring out what an object does and how to use it) and the Gulf of Evaluation (evaluating results after using the object). To cross these gulfs, we use a seven-stage action cycle. This action cycle happens unconsciously unless we’re interacting with an unfamiliar or confusing object. Each stage answers a particular question.

- Goal: What result do I want to achieve?

- Plan: What options do I have for achieving my goal?

- Specify: Which of these options will I choose?

- Perform: How do I execute my plan?

- Perceive: What happened when I did that?

- Interpret: What does that result mean?

- Compare: Did I reach my goal?

Let’s use grocery shopping as an example to see the seven steps in action. In that case, they may look something like this:

- Goal: I need to go grocery shopping.

- Plan: Should I drive to the store or take the bus?

- Specify: I think I’ll drive.

- Perform: I’ll follow the usual route to the store instead of a new one.

- Perceive: Everything went smoothly and I’ve parked at the store.

- Interpret: This means I can now go inside and shop.

- Compare: I’ve met my goal of going grocery shopping!

This cycle will play out multiple times for any given action because most behaviors have an overall goal (like “go grocery shopping”) composed of several subgoals (like “start the car”). Determining the overall goal is important because it gives designers a better idea of what users really want. To do this, we use root cause analysis: continually asking “why?” about a behavior until there is no further answer. The root cause of a behavior might be internal and goal-driven (studying for a test) or external and event-driven (putting in earplugs in a noisy environment).

Conscious vs. Subconscious Processing

People react to technology in their lives through thoughts and emotions, and good design can capitalize on those reactions. To do that well, designers need an accurate working model of how the brain processes information.

There are two types of cognitive processing: conscious and subconscious. Conscious processing is deliberate: It’s where we compare options, predict possible outcomes, and come up with new ideas. Subconscious processing is automatic and works in generalizations. It’s the home of pattern recognition and snap judgments.

We can think of these differences in terms of three levels of processing: visceral, behavioral, and reflective.

- The visceral level is subconscious and involves our most primitive reflexes, like startling at a loud noise or flinching when something flies towards us unexpectedly. Visceral reactions can have a powerful influence on how users respond to an object. An otherwise well-designed product can fail if it provokes a negative visceral response in the user (as with a sudden, blaring alarm or an unpleasant odor).

- The behavioral level is the home of the subconscious process of turning thought into action. It fills the gap between intention (like speaking) and action (moving your lips, tongue, and jaw in specific ways). This level is important in design because it functions based on expectations—if you flip a light switch, you subconsciously expect a light to turn on. If it doesn’t, your ability to process the action is interrupted by bad design.

- The reflective level is where conscious processing happens. This is where we interpret information from the other levels and use those conclusions to make decisions. If a product “rubs us the wrong way” on the visceral level, the reflective level might evaluate that information and consciously decide not to use that product again.

- For example, if an alarm clock keeps perfect time and is easy to use, but the alarm sound itself is so loud and jarring that you wake up each morning thinking the house is on fire, the memory of that visceral response might make you view the interaction negatively and avoid that specific clock (or brand) in the future.

Memory

Memory also impacts our interactions with objects. There are two kinds of knowledge: “knowledge in the head” (memory) and “knowledge in the world,” which is anything we don’t have to remember because it’s contained in the environment (like the letters printed on keyboard keys). Putting knowledge into the world frees up space in our memories and makes it easier to use an object.

The knowledge we keep in our heads is only as precise as the environment requires. Most people won’t notice if you change the silhouette on an American penny because we only need to remember the color and size to tell a penny apart from other coins. We’re more likely to notice changes to the portrait on an American dollar bill, because we’re used to relying on that image to help us tell bills apart (since they are identical in size, shape, and color).

Memories can be stored either short- or long-term. Short-term memory is the automatic storage of recent information. We can store about five to seven items in short-term memory at a time, but if we lose focus, those memories quickly disappear. This is important for design: Any design that requires the user to remember something is likely to cause errors.

Long-term memory isn’t limited by time or number of items, but memories are stored subjectively. Meaningful things are easy to remember; arbitrary things are not. To remember arbitrary things, we need to impose our own meaning through mnemonics or approximate mental models. Designers can make this easier for users by making arbitrary information map onto existing mental models. For example, Apple has kept the location of the power and volume buttons roughly the same with each new version of the iPhone.

The Error of “Human Error”

Industry estimates attribute between 75 and 95 percent of industrial accidents to human error. This number is misleading, since what we think of as “human errors” are more likely the outcomes of systems that have been unintentionally designed to create error rather than prevent it.

Detecting Errors

Errors can be divided into “slips” (errors of doing) and “mistakes” (errors of thinking). Accidentally putting salt instead of sugar in your coffee is a slip—your thinking was correct, but the action went awry. Pressing the wrong button on a new remote control is a mistake—you carried out the action fine, but your thought about the button’s function was wrong.

Most everyday errors are slips, since they happen during the subconscious transition from thinking to doing. Slips happen more frequently to experts than beginners, since beginners are consciously thinking through each step of a task. On the other hand, mistakes are more likely to happen in brand new scenarios where we have no prior experience to pull from, or even familiar scenarios if we misread the situation.

Causes of Error

One major cause of error is that our technology is engineered for “perfect” humans who never lose focus, get tired, forget information, or get interrupted. Unfortunately, these humans don’t exist. Interruptions in particular are a major source of error, especially in high-risk environments like medicine and aviation.

Social and economic pressures also cause error. The larger the system, the more expensive it is to shut down to investigate and fix errors. As a result, people overlook errors and make questionable decisions to save time and money. If conditions line up in a certain way, what starts as a small error can escalate into disastrous consequences.

- Social and economic pressures played a critical role in the Tenerife airport disaster, when a plane taking off before receiving clearance crashed into another plane taxiing down the runway at the wrong time. The first plane had already been delayed, and the captain decided to take off early to get ahead of a heavy fog rolling in, ignoring the objections of the first officer. The crew of the second plane questioned the unusual order from air traffic control to taxi on the runway, but obeyed anyway. Social hierarchy and economic pressure led both crews to make critical mistakes, ultimately costing 583 lives.

Preventing Errors

Good design can minimize errors in many ways. One approach is resilience engineering, which focuses on building robust systems where error is expected and prepared for in advance. There are three main tenets of resilience engineering.

- Consider all the systems involved in product development (including social systems).

- Test under real-life conditions, even if it means shutting down parts of a system.

- Test continuously, not as a means to an end, since situations are always changing.

Constraints

Designers can also use constraints, which limit the ways users can interact with an object. There are four main types of constraints: physical, cultural, semantic, and logical.

Physical constraints are physical qualities of an object that limit the ways it can interact with users or other objects. The shape and size of a key is a physical constraint that determines the types of locks the key can fit into. Childproof caps on medicine bottles are physical constraints that limit the type of users who can open the bottle.

Cultural constraints are the “rules” of society that help us understand how to interact with our environment. For example, when we see a traditional doorknob, we expect that whatever surface it’s attached to is a door that can be opened. This isn’t caused by the design of the doorknob, but by the cultural convention that says “knobs open doors.”

When these agreements about how things are done are codified into law or official literature, they become standards. We rely on standards when design alone isn’t enough to make sure everyone knows the “rules” of a situation (for example, the layout of numbers on an analog clock is standardized so that we can read any clock, anywhere in the world).

Although they’re less common, semantic and logical constraints are still important. Semantic constraints dictate whether information is meaningful. This is why we can ignore streetlights while driving, but still notice brake lights—we’ve assigned meaning to brake lights (“stop!”), so we know to pay attention and react.

Logical constraints make use of fundamental logic (like process of elimination) to guide behavior. For example, if you take apart the plumbing beneath a sink drain to fix a leak, then discover an extra part left over after you’ve reassembled the pipes, you know you’ve done something wrong because, logically, all the parts that came out should have gone back in.

The Design Thinking Process

“Design thinking” is the process of examining a situation to discover the root problem, exploring possible solutions to that problem, testing those solutions, and making improvements based on those tests. This process is iterative, which means it is repeated as many times as necessary, each time with slight improvements based on previous iterations.

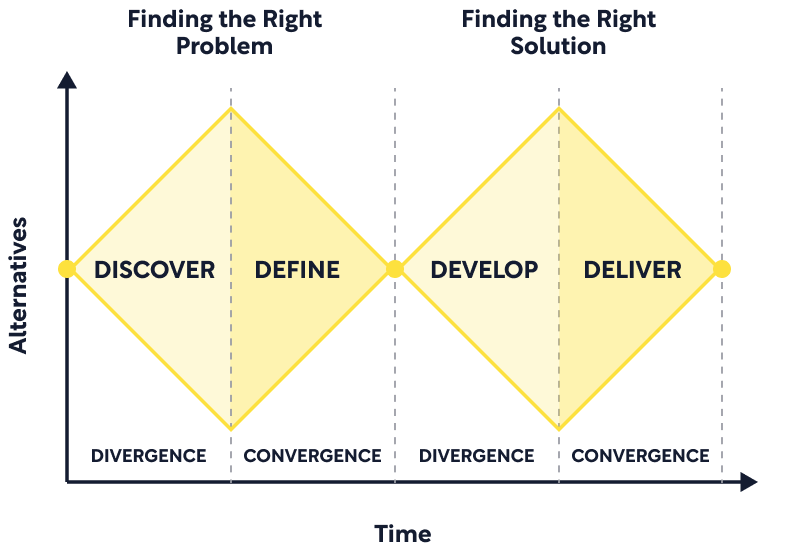

Design thinking involves two tasks: finding the right problem and finding the right solution. Designers are often hired to solve symptoms, but good designers dig deeper to find the underlying problem before coming up with solutions. To do this, designers run through four stages: observation, idea generation, prototyping, and testing.

The observation phase involves gathering information on the people who will use the new design. This is different from market research: Designers want to know what people need and how they might use certain products, while marketers want to know which groups of people are most likely to buy the product.

After observation comes the idea generation phase, where designers brainstorm solutions to the problem. The goal is to generate as many ideas as possible without censoring “silly” ideas, since they might spark valuable discussion. Designers will then create prototypes of the most promising ideas using things like sketches and cardboard models.

Once the prototype is refined, the testing phase begins, where members of the target user group are asked to try out the prototype and give their feedback. Designers then repeat the entire process based on the feedback from the first round of testing. The iterative design thinking process emphasizes testing in small batches with refinement in between rather than waiting until the final product and testing with a much larger group.

Design Thinking in the Real World

In reality, the design process often doesn’t live up to the above ideal. Business pressures are the primary culprit here, since a well-designed product will still fail if it’s over budget and past deadlines. Product development team dynamics are also a challenge. The best teams are multidisciplinary, combining unique knowledge from different fields. However, each team member usually thinks their discipline is the most important.

Diversity among users can also impact design. For users with disabilities, designers can turn to a universal design approach. Universal design creates products that are usable by the widest range of people by designing for the highest need, not the average need. Adopting a universal design approach changes how designers choose the types of people and environments to observe as well as the features they focus on most in the prototyping and testing phases.

This approach is “universal” because if a product, environment, or service is designed with disability access in mind, it will typically also be usable for those without disabilities. For example, curb cuts were originally designed for wheelchair users but are also enormously helpful for anyone pushing a stroller or lugging a suitcase.

Technological Innovation

Economic pressures drive innovation. This can take the form of “featuritis,” or the tendency to add more and more features to a product to keep up with competitors. These features ultimately degrade the design quality of the original product. Rather than winning over customers with new features, it’s better to do one thing better than anyone else on the market.

Real quality innovation can be either radical or incremental. Radical innovation involves high-risk, game-changing ideas while incremental innovation makes small improvements to existing products over time. The invention of the automobile was radical—all the small improvements that led to cars as we know them today happened incrementally.

The Future of Technology

Rapid technological innovation raises questions about the future of user experience. The way we interact with objects around us will certainly change in response to new technologies, and cultural conventions will change to reflect that. But human needs will remain the same. For example, the keyboard has evolved from mechanical typewriters to computer keyboards to touchscreen versions, but the need to record written information has stayed the same. In other words, human needs won’t change, but the way they’re satisfied will.

Some people fear that the rise of smart technology is making humans less intelligent because we can delegate even the most basic tasks to machines—and if those machines fail, we are totally helpless. It’s true that some traditional skills are becoming obsolete thanks to new technology, but that process ultimately makes us smarter. The energy saved by not having to create a fire every time we need heat or light or rely on long division for simple calculations can be channeled into higher-level pursuits. Our intelligence hasn’t changed, only the tasks we apply it to. The key is in using technology to do the jobs technology can and should do.

Increased Creation and Consumption

Technological innovation has made it easier than ever for anyone with a computer to create and publish new media. While amateur content creation has gotten easier, creating professional content has gotten harder and more expensive. The accessibility of smart technology levels the playing field, but makes it much harder to find quality, fact-checked content.

For manufacturers, new technologies present a different challenge. The need to entice buyers is a fundamental part of business, because a product that doesn’t sell is a failure, no matter how well designed it is. But while services like healthcare and food distribution are self-sustaining (because there will always be a need for them), durable physical goods are not. If everyone who needs a particular product purchases one, there’s no one left to sell it to. If everyone already owns a smartphone, how do you convince them to buy the new and improved model?

One way manufacturers get around this is through planned obsolescence, the practice of designing products that will break down after a certain amount of time and need to be replaced. This creates a cycle of consumption: buy something, use it until it breaks, throw it away, and buy another. While this cycle is good for business, the waste it generates is horrible for the environment. Thankfully, the combination of new technologies and a growing cultural awareness of sustainability issues is creating a new paradigm. The future of technology involves products designed with both the user and the environment in mind.

Introduction

Every man-made object, environment, or program in our world is designed. From doorknobs to smartphone apps, design pervades our lives to the point that it often becomes completely invisible. When we struggle with one of these designs, we assume that our difficulties are our own fault, or that we’re just not smart enough to figure it out. But that blame is misplaced. More often than not, the true culprit in cases of “human error” is actually bad design.

Traditionally, design is described in terms of form and function—how an object looks, and how it works. But this description totally ignores the question of how users interact with the design, and that oversight is a common reason why products fail. An object can be extremely useful and visually beautiful, but if the average user can’t figure out how to work it, it’s ultimately useless.

The first edition of this book was released in 1988 under the title The Psychology of Everyday Things. It was considered controversial at the time, and engineers and designers resisted the idea that understanding psychology was important for design. This revised edition was released in 2013 and contains updated examples and a few added principles, but the main ideas are the same. The author, Don Norman, is both a cognitive psychologist and an engineer. He was one of the first people to formally point out the overlap between the two fields, and to highlight the importance of considering user experience.

- (Shortform note: The word “technology” appears frequently in both the book and this summary. In this context, it refers to any object created by humans, not just those with computer systems. So a smartphone is an example of technology, but so is a basketball.)

User interaction is a two-way relationship between a person and an object. In the first two chapters, we’ll explore the ways that relationship is impacted both by the design of the object and by the user’s thoughts and emotions. Chapter 3 explores the role of human memory in product design, while Chapters 4 and 5 discuss specific ways that good design can guide user experience and prevent dangerous errors. In the final two chapters, we’ll learn more about the ideal product design process and the way real-world pressures force designers to compromise that ideal. The summary concludes with a look to the future of user interaction in an increasingly digital world.

Chapter 1: How the Design of Physical Objects Shapes Our Lives

This chapter lays the foundation for the rest of the book by illustrating how the design of physical objects has a much bigger impact on our lives than most people assume. Poorly designed objects can cause frustration, time delays, and even injury.

What Does “Good Design” Look Like?

At its core, design is any human influence on the physical world. We tend to think of this in terms of buildings, fashion, or products, but design is much broader than just a few fields. Every object or environment that has been created or modified by humans is designed. This applies to everything from ancient architectural marvels to the layout of clothes in your closet.

As technology evolves, new fields of design pop up to focus on specific problems. User interaction is important in every subfield, but it’s most often talked about in industrial, interaction, and experience design.

- Industrial design focuses on the creation and development of physical objects, with a particular focus on function, aesthetics, and value.

- Interaction design focuses on the interface between user and object, usually in a digital context (think website design).

- Experience design focuses on the emotional experience of using a product, service, or environment.

Each of these fields focuses on redefining “good” design in terms of user experience. Two important principles guiding this definition are discoverability and understanding. Discoverability refers to whether a user can figure out what an object is and how to use it without considerable effort. Discoverability answers the question, “How do I use this thing?”

- For example, the style of a door handle and the location of the hinges make it easy to discern what that object is and how it works—it is easily discoverable. But a modern or industrial door with no visible hardware can be almost impossible to figure out.

Understanding, in this context, refers to the user’s ability to make meaning out of the discoverable features of the object. Understanding answers the questions, “What is this, and why do I want to use it in the first place?” On a normal door, handles and hardware indicate where to push or pull—but they also help us understand what this object is (a door) and what it’s used for (opening and closing). In other words, good design has to consider not only the form and function of a product, but also the experience of interacting with that product.

Why Good Designers Make Bad Products

If interactions are such a crucial part of good design, why do designers so often get it so wrong? There are two primary reasons for this.

- Traditionally, the objects and technology we interact with on a daily basis are created by engineers, who are typically logical thinkers who have been trained to focus only on function. Their goal is to create a superior product—and because they understand how to use that product, they often assume others will understand, too. In other words, engineers create products under the false assumption that people perform like machines—they always act logically, aren’t influenced by emotion, and rarely make errors.

- Engineers and designers typically don’t have the ultimate say in all decisions about a product. They’re limited by the budget set by the company or client, by the logistical capabilities of the manufacturer, and by the needs of the marketing team. The final product must be not only well-designed, but also possible to produce (at scale and within budget) and easy to sell.

Human-Centered Design

One solution to this problem is human-centered design. Human-centered design is not a subfield like industrial or interaction design. It is a design philosophy that can be applied in any design specialization. The goal of human-centered design is to flip the traditional design process on its head by focusing on human needs and behaviors first, and designing products to fit those needs, rather than designing a product and hoping that users figure out how to use it.

Let’s use a simple fork as an example. The traditional design process would most likely begin with a designer thinking, “I’d like to design a new kind of fork.” She would then brainstorm new versions of the fork, create sketches and prototypes of those ideas, and tweak those prototypes until she was happy with the finished product.

Instead of a concrete product idea, a human-centered design approach would begin with a set of questions: “What tools do people use to eat food? What problems do they run into with those tools? How can they be improved?” The designer would then observe different types of people eating different types of food, conduct interviews with people about their preferred cutlery, and research the history of eating utensils. She would identify the main problems in the current technology and only then begin to brainstorm ideas for how to address those needs.

The Speed of Technological Development

Modern technology evolves faster than ever before. While previous generations may have had one phone per household that was only updated after decades of use, we now have personal cell phones, with new and improved models being released twice a year. In contrast, design practices and principles evolve much more slowly. This means that technology is outpacing our ability to effectively interact with it.

This creates a paradox: Technology simplifies our lives, but the more advanced technology becomes, the more difficult it is to learn and operate, which adds complication. We see this when users buy a new, state-of-the-art television, only to be overwhelmed by the endless remote control buttons and ultimately learn to use just one or two features.

How Do We Know How to Use an Object?

There are six design principles that influence how we interact with an object: affordances, signifiers, mapping, feedback, models, and the system image. Each of these principles may be more or less relevant than the others for a specific object, but they are always present to some degree.

Affordances

The term “affordance” refers to the relationship between an object and a user. Affordances are the finite number of ways in which a user can possibly interact with a given object. They answer the question, “What is this thing for?”

For example, think of a chair. Chairs typically have a flat surface, which we intuitively recognize as an indicator of support, either for a person or an object. In other words, the look of a chair suggests that it is for sitting on, or possibly resting objects on.

Some affordances are obvious from the appearance of the object itself, like the flat surface of the chair. Other affordances may be less intuitive or even completely hidden. For example, if the chair were light enough (and the user strong enough), throwing it across the room may be another affordance of the chair. Similarly, if the chair had a well-hidden secret compartment, hiding objects would be an affordance of the chair, regardless of whether the user discovers the compartment or not. The key is that it is a possible interaction.

Affordances can be deliberately designed—by nature, a chair is designed for sitting, a coat rack is designed to hold coats, and a stair railing is designed to prevent falls. But affordances can also arise completely by accident. The chair could also be used as a step stool, the coat rack as a child’s climbing gym, or the railing as a bookshelf. These aren’t the uses the designer had in mind, but they are equally valid affordances.

However, affordances are not merely properties of the object itself. Instead, they describe the relationship between the object and the user. This means that without a user to interact with, an object has no affordances. It also means that affordances for the same object can vary between different users. Think back to the chair example. If the user is a toddler, crawling under might be another affordance of the chair. But if the user is a large adult, crawling under is not a possible interaction between the user and the chair. The chair remains the same, but the affordance changes based on the different characteristics of the user.

Signifiers

The idea of hidden affordances highlights the need for signifiers. A signifier is a signal that draws the user’s attention to an affordance they may not have intuitively discovered. In a digital context, a “click here” button or a flashing icon is a possible signifier. On a door, a “push” sign is a signifier. If that door also has handles, the sign contrasts with the perceived affordance of the handles (pulling), which may cause confusion.

Affordances and signifiers are easy to mix up, and even seasoned designers sometimes use one word when they really mean the other. The key difference is that affordances describe the possible interactions between object and user, whereas signifiers are a way of advertising those affordances. For example, if you take the “push” sign off a door, the door will still open when pushed: You’ve removed the signifier, but not the affordance. For designers, signifiers are more important than affordances. The most sophisticated technology is pretty useless if a user can’t find the “on” button.

Mapping

For some simple objects or interfaces, signifiers alone will give the user enough information to use the object successfully. However, more complex objects might also require the use of mapping in order to be usable. Mapping uses the position of two objects to communicate the relationship between them. It’s the simplest way to show the user which controls correspond to which affordances.

For example, picture the knobs on a stovetop. How do you know which knob operates which burner? If the stove is well-designed, the arrangement of the knobs will map onto the arrangement of the burners (typically a square). In that case, if you want to turn on the bottom left burner, you intuitively reach for the bottom left knob.

This stovetop example is notorious among designers because effective mapping of knobs to burners is so rare. When most of us picture a stovetop and its controls, we picture the burners arranged in a square, but the knobs arranged in a line. In this setup, the user has to invest far more time and mental energy to figure out which knob controls which burner, and may have to resort to trial and error.

Feedback

The next clue for interacting with an object is feedback. If you’ve followed signifiers to an affordance and used mapping to figure out which control you need to use, how do you know whether you got it right? Feedback is a sensory signal that alerts the user that what they’re doing to an object is having some effect. Information that results from a user’s action is called “feedback”; information that shows a user how to act in the first place is called “feedforward.” Feedforward guides users through the execution phase, while feedback guides them through evaluation.

Our sensory systems automatically provide basic feedback about our environment through all of our senses. We automatically process the look, feel, sound, and scent of objects around us. However, for more complex objects, feedback signals may not be automatic. In that case, designers can deliberately add in sources of feedback (like a small green bulb that lights up when a machine is “on”).

Feedback tells us when something works, but more importantly, feedback tells us when an object is not working the way we need it to. In a car, a dashboard alert light or the sound of squeaking brakes are both sources of feedback that let us know something is wrong. Without these signals, we might not recognize a major problem until it’s too late. This is especially important when the object’s function is hidden from view.

But too much feedback can also cause problems. Think of a GPS system that announces every single cross street. By the time you reach the street you’re looking for, you’ve long tuned out the constant updates and are likely to miss it. The same is true of smoke alarms that can’t be easily turned off—once you’re aware of the emergency, the constant, ear-splitting beeping makes it more difficult to react appropriately.

Even the smallest decisions on the design of feedback can have enormous consequences. One notorious example of this is the Three Mile Island incident, in which a nuclear reactor at a plant in Pennsylvania suffered a partial meltdown and very nearly resulted in a disastrous radiation leak. The cause of the incident was initially attributed to human error, but further investigation traced much of the problem to a single indicator light. The light did not monitor the actual position of an important relief valve; it showed only whether the signal to close the valve had been sent. When the valve stuck open, the control panel still suggested that it was closed, and the operators acted on that false picture. The confusion caused by this small design decision allowed a minor issue to escalate into a full-scale nuclear incident.

Clearly, feedback is important, and too much or too little can cause problems. But the type of feedback and the way it’s presented are also crucial. A car’s turn signal flashes on the side of the car that matches the direction the driver intends to turn. If the opposite side flashes, or both at the same time, that gives you no useful information about what the car in front of you is about to do.

Models

So far, we know that affordances tell us what an object is for, signifiers tell us what and where those affordances are, mapping helps us find the right controls to engage with those affordances, and feedback tells us whether everything is working right. All of this information produces a model (also called a mental model or conceptual model). A model is a mental picture of an object and how it works.

Example: Refrigerator Controls

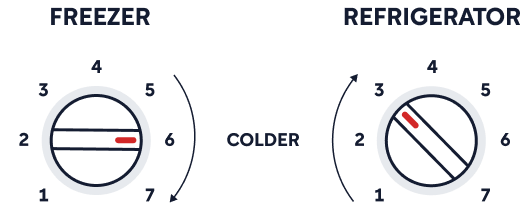

The design of an object itself suggests a certain conceptual model. For example, some refrigerators have two temperature control knobs, one labeled “freezer” and the other “refrigerator.” These are two separate knobs, which implies that the temperature of each section is controlled completely independently.

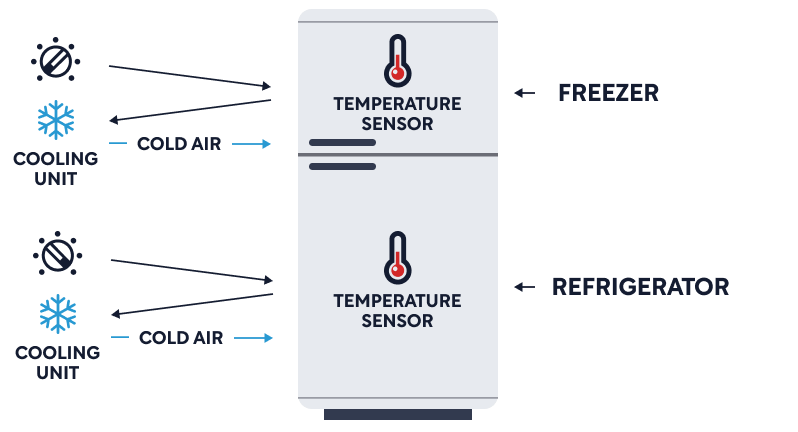

Seeing this, you’d probably assume that the refrigerator and the freezer each contain an independent temperature sensor that controls an independent cooling mechanism. This mental model would look something like this:

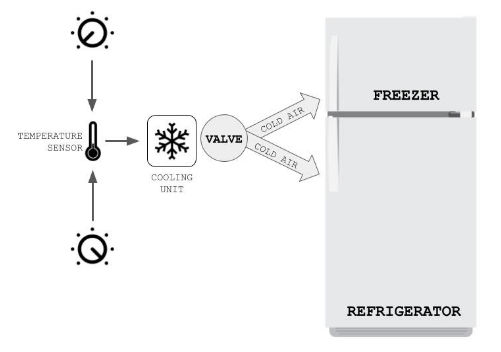

However, in reality, this fridge contains only one cooling unit. The knobs control a central valve that adjusts how much cold air is blown into each section of the refrigerator. This means that adjusting one knob will change the temperature in both the freezer and the refrigerator. So, if the freezer were too cold, adjusting the freezer knob would warm that section up, but would also funnel more cold air into the refrigerator below, cooling that section even further.

In this example, an inaccurate mental model of the cooling system is likely to result in frustration (and possibly some spoiled food). But it’s not the user’s fault that their model is inaccurate—the dual knobs would lead almost anyone to the same faulty conclusion. The design itself is the problem here, not the user’s understanding of it.
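The gap between the two models can be made concrete with a toy sketch. Everything here is an assumption made for illustration: the function names, the "units" of cold air, and the idea that the cooling unit's output is fixed. It is not a description of how any real refrigerator is built, only a way to see why the dual knobs mislead.

```python
# Toy model with made-up units; not real refrigeration physics.
TOTAL_COLD_AIR = 10  # assumed fixed output of the single cooling unit


def assumed_model(freezer_knob: int, fridge_knob: int) -> dict:
    """What two separate knobs suggest: each section is cooled independently."""
    return {"freezer": freezer_knob, "fridge": fridge_knob}


def actual_model(freezer_units: int) -> dict:
    """One cooling unit: a valve sends some cold air to the freezer, the rest to the fridge."""
    return {"freezer": freezer_units, "fridge": TOTAL_COLD_AIR - freezer_units}


before = actual_model(freezer_units=7)
after = actual_model(freezer_units=5)  # the user turns the freezer "warmer"

print(before)  # {'freezer': 7, 'fridge': 3}
print(after)   # {'freezer': 5, 'fridge': 5}
```

In the assumed model, changing one argument never affects the other section. In the sketch of the actual design, sending less cold air to the freezer necessarily sends more to the fridge, which is exactly the side effect the two knobs hide.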

The System Image

Signifiers, mapping, feedback, and any obvious affordances help us understand how to use an object. But we also have access to even more information that can guide our interactions with that object, like user manuals, product websites, and our own past experience with similar objects. Taken together, the sum total of the information we have about an object is the system image. The system image is ultimately what determines how a user interacts with an object. For designers, this means the object must speak for itself—without the designer in the room to teach them, users still need to be able to figure out how to use it.

The system image gives us another way to think about why designers sometimes make confusing or frustrating things. Engineers and designers expect that the user’s mental model of the object will perfectly match their own mental model. But a designer may have spent months working on a particular design, whereas the user has zero previous experience with that object. The system image is the only way designers can communicate their model of how something works to the user.

Exercise: Reexamine Everyday Objects

We typically don’t think about the design of things around us unless they present a problem. This exercise will help you think critically about items you use every day.

This chapter looks at how the design of common items like doors, chairs, and kitchen appliances can influence how we interact with them. Take a moment to look around your environment right now. Choose one object within arm’s reach. Imagine showing the object to a friend who had never seen anything like it before. Do you think they would be able to figure out what it does? Would they be able to use it correctly?

What signifiers or perceivable affordances does the object have that would help your friend figure out its purpose? (Remember: Signifiers are signals that tell us where or how to interact with something, such as a “push” sign on a door. Perceivable affordances are obvious ways we can interact with an object, like a flat surface for supporting weight, or a hollow vessel for holding liquid.)

If you were to redesign this object to make it easier to use and understand, what changes would you make? (For example, adding signifiers, or rearranging controls to naturally map onto the object.) How would these changes help?

Now that you’re thinking like a designer, look around the room again. What other obvious signifiers do you see on other objects or appliances?

Chapter 2.1: Conscious and Subconscious Processing

This chapter gives an overview of the conscious and subconscious mental processes that determine how we perceive, interpret, and respond to objects in our environment. Traditionally, studying human cognition, emotion, and behavior is the domain of psychologists, and many designers underestimate the importance of understanding human behavior. They assume that their experience with their own thoughts and emotions is more than enough to predict how other people will think and feel in a given situation. But because the bulk of human cognitive processing happens on a subconscious level, our own experiences of our conscious thoughts only show part of the picture.

Every designed object or system will ultimately be used by people. A product that is technically perfectly engineered but is confusing to use is ultimately a failure. In other words, understanding the thoughts and emotions that underlie our interactions with technology has important implications for design.

Evaluating Behavior With The Seven Stages of Action

When we interact with a new object, we have two problems to solve: “How do I use this?” and “Did that work?” Author Don Norman calls these “the gulf of execution” and “the gulf of evaluation.”

- The Gulf of Execution refers to the process of figuring out what an object does and how to use it. This can happen either before using the object or while trying it out. Affordances, signifiers, and mapping are tools designers use to help users bridge this gulf.

- The Gulf of Evaluation occurs after using an object and refers to the process of evaluating what the device did and whether that action matched our goals. Feedback and accurate mental models are the most helpful tools for bridging this gulf.

The Seven Stages of Action

The gulfs above are important because they represent the two components of an action: execution and evaluation. We can break these down even further for a total of seven stages that take us from the impetus of an action all the way through to successful completion. (If seven distinct steps seem excessive, remember that for most actions in our daily lives, these stages play out completely unconsciously. We only become aware of them for tasks that are unfamiliar or confusing.)

A great example of this is driving a car. Experienced drivers make turns and merge into traffic without much conscious thought. The stages of action have become automatic through repetition, only requiring thought when something novel comes up, like construction blocking a particular road. New drivers, on the other hand, consciously think through every step. Where an experienced driver might think “I need to turn left,” a new driver would think “I need to slow down, check my mirrors, check for oncoming traffic, turn the wheel, hit the gas pedal with just the right amount of force, then turn the wheel back again.”

The seven stages of action are: goal, plan, specify, perform, perceive, interpret, compare. These steps carry the user across the gulfs of both execution and evaluation. The first stage, “goal,” sets the standard that will be used later to determine if the action was successful. The next three stages (plan, specify, perform) bridge the Gulf of Execution, while the final three stages (perceive, interpret, compare) bridge the Gulf of Evaluation. Each of these stages answers a particular question:

- Goal: What result do I want to achieve?

- Plan: What options do I have for achieving my goal?

- Specify: Which of these options will I choose?

- Perform: How do I execute my plan?

- Perceive: What happened when I did that?

- Interpret: What does that result mean?

- Compare: Did I reach my goal?

Let’s use the driving example again to see the seven steps in action. In that case, they may look something like this:

- Goal: I need to go grocery shopping.

- Plan: Should I drive to the store or take the bus?

- Specify: I think I’ll drive.

- Perform: I’ll follow the usual route to the store instead of a new one.

- Perceive: Everything went smoothly and I’ve parked at the store.

- Interpret: This means I can now go inside and shop.

- Compare: I’ve met my goal of going grocery shopping!

In the example above, the action was successful in achieving the goal. However, the goal of going grocery shopping is part of an overall system that includes both larger and smaller goals. For example, if I’m making a particular recipe but don’t have an ingredient I need, going grocery shopping would become a subgoal of my overall goal of making that recipe. Grocery shopping itself would have multiple subgoals: locating each ingredient in the store, loading the groceries back into the car, and so on.

Conscious vs. Subconscious Processing

This section gives an overview of the different ways people cognitively process thoughts and emotions. This subject is almost never included in traditional design or engineering training, but it’s important to understand because it is the core of user experience. People react to technology in their lives through thoughts and emotions, and good design can capitalize on those reactions. To do that well, designers need an accurate working model of how the human brain processes information.

Generally speaking, people are only consciously aware of a small portion of their thoughts and emotions. The rest of our opinions, decisions, emotions, and reactions happen without any conscious input. When we learn a new skill, we need conscious focus at first, but once we fully master the skill and make it a frequent habit, performing it requires less and less conscious effort until it is fully subconscious. The process of mastering a skill to the point that it can be executed subconsciously is called “overlearning.” Think of the new driver compared to the experienced driver: The new driver is actively concentrating, while the experienced driver can safely carry on a conversation or sing along to the radio.

(Overlearning applies to complex skills like driving, walking, and learning a language, but can also apply to factual information. For example, if you’re filling out a form and are asked for your phone number, you’ll most likely be able to answer without much effort. But if you’re asked for the address of the second house you ever lived in, it will take you much longer to come up with the answer.)

Conscious and subconscious processing each have important strengths. Conscious processing is what sets humans apart from animals. It allows us to compare options, predict possible outcomes, and come up with new ideas. Conscious processing happens when we deliberately choose to learn or consider something new.

Subconscious processing, on the other hand, happens automatically. This is how we make connections between seemingly unrelated events in our lives, or jump to premature conclusions based on our past experiences. Subconscious processing works in generalizations—it automatically predicts that new experiences will follow the same pattern as similar previous experiences.

Both conscious and subconscious processing are tied to emotions. Emotions trigger biochemical reactions that prompt the brain to focus more on one type of processing than the other. Generally speaking, negative emotions like fear shut down conscious processing and divert resources to subconscious survival instincts. On the other hand, positive, calm emotions allow for the use of conscious processes like creativity, since the brain is not responding to a perceived threat. This is why we react to strong feelings of fear with a fight, flight, or freeze response. All three of these possible responses are completely subconscious—we can’t consciously choose one, and we have very little conscious control over them once they appear.

The strength of an emotion also matters: Strong emotions bias the brain toward subconscious processes, while milder feelings leave room for conscious thought to intervene.

Three Levels of Processing

(Shortform note: This section gives a basic overview of Norman’s way of thinking about the ways humans process thoughts and emotions. For a more detailed look at his thoughts on the subject, see his book Emotional Design.)

The study of human cognition and emotion is an extremely complex area of neuroscience. A simplified model of this process is helpful for understanding the basic ideas and their implications for design. This model divides emotional and cognitive processing into three distinct levels: visceral, behavioral, and reflective. Each of these levels has important implications for design, so understanding them is crucial in order to design technology that is easy to use and to enjoy.

The Visceral Level

The visceral level involves our most primitive reflexes, like startling at a loud noise or flinching when something flies towards us unexpectedly. This happens in the lowest part of our brains, the same area responsible for basic functions like breathing and balancing upright.

The visceral level controls the fight, flight, and freeze responses by signaling the muscles and heart to behave in particular ways. For example, the bodily signature of a flight response is a racing heartbeat and increased muscle tension. This happens in response to a fearful stimulus, but the process can also work in the opposite direction. If your heart is racing and your muscles are tense from exertion or excitement, you might mistakenly perceive that combination of sensations as a flight response, and become fearful as a result. Our emotions and perceptions influence our bodies, and vice versa.

Processing at the visceral level is completely subconscious. It can’t be influenced by learning, except for basic processes like adaptation (for example, if you work in an environment with frequent bursts of loud noise, your automatic startle response to that stimulus may decrease over time as your brain learns that sudden loud noises don’t always mean danger).

This level of processing is especially important for designers to understand. Visceral reactions can have a powerful influence on how users respond to an object. An otherwise well-designed product can fail if it provokes a negative visceral response in the user (like a sudden, blaring alarm or an unpleasant odor).

The Behavioral Level

The behavioral level also primarily deals with subconscious processing. This might seem counterintuitive, since we typically choose our behaviors and can observe them consciously. But the behavioral level of processing is not concerned with why we act the way we do, but how.

For example, if you want to speak, you have to control your lips, tongue, and jaw in very specific ways to produce the right sounds. You might consciously choose what you want to say, but most of us don’t actively will our mouths to make certain shapes. The same applies to wiggling your fingers or opening a drawer: We’re not conscious of the neurological processes involved in those actions. We decide what to do, and our brains subconsciously forward the message to the correct body parts.

(Unlike the visceral level, responses at the behavioral level can be learned and changed. This is where overlearning comes in—when we practice something over and over until it becomes a habit, we’ve moved that skill from a conscious level to the subconscious behavioral level. Now, when the associated trigger pops up, we carry out that action without any conscious thought. Overlearning is an important factor in understanding human error, which is covered more thoroughly in Chapter 5.)

Behavioral processing also has implications for design. By definition, behavioral responses have a specific expectation attached. If you open your laptop and press the power button, you expect it to turn on. When you turn a doorknob and push, you expect the door to open. These expectations are crucial for designers to understand because they have such a huge impact on emotional responses. When our expectations are not met, we typically experience frustration or disappointment; when they are met or exceeded, we experience satisfaction and pleasure. In turn, these emotions strongly influence how we think and feel about the experience of interacting with a given object.

We often make these associations without realizing it. If your laptop reliably powers on each time you expect it to, you learn to associate the laptop with satisfaction and confirmed expectations. If the door frequently doesn’t open when you expect it to (perhaps because it lacks the necessary signifiers), you associate that type of door with frustration and annoyance.

The most important design tool for managing user expectations is feedback. If an experience defies our expectations, we might feel helpless or confused about how to proceed, ultimately influencing how we think and feel about the experience. Feedback mitigates this damage by explaining what went wrong, allowing users to regain a sense of control. Even better, if feedback gives us information about the problem and how to fix it, we’re much less likely to experience feelings of helplessness or confusion.

The Reflective Level

The reflective level is the level of conscious processing. Where visceral and behavioral processing happens instinctively and immediately, reflective processing is deliberate and therefore much slower. The reflective level allows us to brainstorm, consider alternatives, exercise logic and creativity, examine a new idea, and, as the name implies, reflect back on past experiences.

Emotion plays an important role at this level as well. Where the visceral and behavioral levels deal with subconscious, automatic emotional responses, the reflective level provokes emotional responses based on our own interpretation of an experience. For example, while fear is an automatic visceral response, anxiety about possible future events is a reflective response. Anxiety arises from our ability to predict possible futures based on current trends. But this is the same process that underlies feelings like excitement and anticipation. Our own interpretation of our predictions decides which of these emotions we experience.

Another example of this effect is the difference between guilt and pride. In order for us to feel either of these emotions, we have to believe we’re directly responsible for the outcome of a situation. If we judge the outcome of that situation positively, we’re more likely to feel pride. If we judge the outcome negatively, we’re more likely to feel guilt.

This kind of reflective processing has a strong impact on how we think about design. The act of reflecting involves synthesizing information from the other two levels into a cohesive memory of an experience. While visceral and behavioral processing is concerned only with the present, reflective processing looks back at the past and uses that information to make predictions about the future.

In a practical context, this means that memory is often the most important factor in determining how a user feels about the experience of interacting with a given object. If the object provoked pleasant visceral reactions and met our expectations, we’ll remember the experience positively. In cases where visceral and behavioral reactions conflict, reflective processing determines which of these factors we give more weight to and ultimately decides whether we remember the experience as positive or negative.

- For example, if an alarm clock keeps perfect time and is easy to use, but the alarm sound itself is so loud and jarring that you wake up each morning thinking the house is on fire, the memory of that visceral response might make you view the interaction negatively and avoid that specific clock (or brand) in the future.

Optimizing the Three Levels

The most successful designs address issues at all three of these levels, leaving users with a pleasant memory of the experience and a positive view of the design. Traditionally, designers, artists, and architects are trained to focus on the visceral level, creating aesthetically beautiful projects that provoke an immediate positive response. Engineers, on the other hand, focus on the reflective level, creating projects that function based on logic and higher reasoning.

This is one reason common objects are so often confusing or disappointing to use. The ultramodern, solid glass door described in Chapter 1 might provoke a positive visceral response, but the lack of a clear, logical way to interact with it creates confusion at the reflective level. At the other extreme, medical equipment is often designed purely to perform a specific function. These machines might be incredibly technologically sophisticated, but the confusing or sterile aesthetic can trigger a visceral fear response in patients.

The three levels of processing also map onto the seven stages of action. The second and last of the seven stages (“plan” and “compare”) happen at the reflective level, as they involve consciously weighing options and evaluating results. The “specify” and “interpret” stages happen at the behavioral level, involving a mix of conscious and subconscious processing. The “perform” and “perceive” stages happen immediately before and after the action itself, and are processed subconsciously on the visceral level.
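One way to keep this mapping straight is to write it down as a small lookup table. The sketch below is only an illustration: the class names, field names, and the `stages_at` helper are all hypothetical, and "goal" is left without a gulf or a level because this summary does not assign it one.

```python
from enum import Enum
from typing import NamedTuple, Optional


class Level(Enum):
    VISCERAL = "visceral"
    BEHAVIORAL = "behavioral"
    REFLECTIVE = "reflective"


class Stage(NamedTuple):
    name: str
    question: str
    gulf: Optional[str]     # None for "goal", which comes before both gulfs
    level: Optional[Level]  # None for "goal": no level is assigned to it here


STAGES = [
    Stage("goal", "What result do I want to achieve?", None, None),
    Stage("plan", "What options do I have for achieving my goal?", "execution", Level.REFLECTIVE),
    Stage("specify", "Which of these options will I choose?", "execution", Level.BEHAVIORAL),
    Stage("perform", "How do I execute my plan?", "execution", Level.VISCERAL),
    Stage("perceive", "What happened when I did that?", "evaluation", Level.VISCERAL),
    Stage("interpret", "What does that result mean?", "evaluation", Level.BEHAVIORAL),
    Stage("compare", "Did I reach my goal?", "evaluation", Level.REFLECTIVE),
]


def stages_at(level: Level) -> list:
    """Return the stages processed at the given level, in order."""
    return [stage for stage in STAGES if stage.level is level]


print([stage.name for stage in stages_at(Level.BEHAVIORAL)])  # ['specify', 'interpret']
```

Laid out this way, the symmetry is easy to see: each level handles one stage on the execution side and one on the evaluation side.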

Example: Flow States

To understand the importance of this overlap, let’s look at the concept of flow. The term “flow,” coined by psychologist Mihaly Csikszentmihalyi, refers to a cognitive and emotional state in which a person is completely absorbed in an activity. When in a state of flow, people are so engrossed in an activity that they completely shut out the outside environment and often lose track of time.

Flow states are created at the behavioral level of processing. Remember that the behavioral level involves subconscious expectations—we expect a certain outcome to follow a certain action, and when that doesn’t happen, we tend to get frustrated. People are most likely to be in flow when the task they’re working on is just slightly above their skill level.

When an overly easy task satisfies our expectations immediately, with very little effort, the lack of challenge leaves us feeling bored. On the other hand, an overly difficult task is likely to be so overwhelming that our expectations are repeatedly unmet, so we get frustrated and give up. Tasks that trigger flow states strike the perfect balance between ease and frustration (or between met and unmet expectations). The tension between these two emotions powerfully captures our attention and pulls us into a state of flow.

Although flow is a personal experience and no one design can trigger a flow state for every single user, it’s still a powerful force in shaping user experience. Users in a state of flow spend hours at a time engaging with a product and will associate it with a feeling of satisfaction and enjoyment. Those positive experiences are likely to create happy, loyal customers. In other words, helping users get into a state of flow is good for the bottom line.

So, what does this have to do with design? Since the design of technology has a direct impact on how users engage with it, changing certain design factors can maximize the chance of creating a specific internal response for the user, including a state of flow. To do this successfully, we need to understand both the three levels of processing and the seven stages of action.

- First, we need to know which of the three levels is responsible for processing the desired emotion (in this case, the fact that being in flow is determined by expectations tells us we are dealing with the behavioral level).

- Next, we need to know which of the stages of action are involved (in this case, “specify” and “interpret”), since these stages tell us what specific activities influence that level of processing. In other words, understanding the levels of processing tells us where design can intervene, and understanding the seven stages of action tells us how.

Exercise: Break Down an Ordinary Action

The seven stages of action happen automatically for easy, routine tasks, but thinking through them consciously is a helpful tool for evaluating design. Let’s practice this now.

Assume that your goal for today was to sit down and read this summary (congratulations, you’ve already succeeded!). The next stages are plan and specify, which help us bridge the gulf of execution. (Remember, the plan stage is where we consider all possible options, and the specify stage is where we select one of those options to try.) List three ways you could have accomplished the goal of reading this summary. Which one did you act on?

Since you’re reading this, we know you met your goal—the gulf of evaluation has been bridged. Now let’s apply this process to a task you haven’t completed yet. Think of a small goal you’d like to accomplish by the end of today. This could be as simple as making lunch, or slightly more involved, like finishing a small work project. What is your goal?

List three different possible ways you could accomplish your small goal. Which of these options will you choose?

Once you act on your plan, you’ll need to bridge a new gap: the gulf of evaluation. How will you know when you’ve achieved your goal? (For example, if your goal was to eat lunch, the feeling of no longer being hungry might be a way to measure success.)

How did it feel to break down a simple task into such tiny steps? Were any of the steps more difficult to identify than others?

Chapter 2.2: Making Sense of Our Own Behavior

We know that behavior can be either event-driven or goal-driven, and that it can be broken down into seven stages of action. But what happens when something goes wrong? How do we explain what happened?

For designers, understanding the way users think about their interactions with technology is important for creating a positive user experience. It is not enough to know how something works on a technical level—we need to understand how the user thinks the object works, and how they explain what happened if something goes wrong, since these are important factors in determining how people respond to technology. For designers and non-designers alike, understanding the biases that shape our own stories helps us make sense of our encounters with bad design.

Causes of Behavior

To understand the way people think about their interactions with technology, we need to distinguish between a user’s overarching goal and the smaller subgoals and actions that lead up to it. As an example, Norman quotes Harvard Business School professor Theodore Levitt, who said, “People don’t want to buy a quarter-inch drill. They want a quarter-inch hole!” However, it’s unlikely that anyone actually wants a quarter-inch hole in their wall just for fun. Instead, drilling a hole is most likely a subgoal leading up to a larger goal of mounting something on the wall.

Determining the overall goal of a behavior is important because it gives designers a better idea of what users really want. If you’re designing in response to someone buying a drill, you’ll keep making new kinds of drills. If you’re designing in response to someone wanting to hang a shelf, you might come up with a new adhesive that allows the user to mount shelves directly on their wall, without drilling holes. You’ve addressed their real need and simplified the process of meeting it.

Root Cause Analysis

To find the original, overarching goal of a behavior, we use a process called root cause analysis. Essentially, we keep asking “why?” about a behavior until there is no further answer. In the drill example, the process of root cause analysis would start with asking, “Why does this person want to buy a drill?” followed by “Why do they need to put a hole in the wall?” until we reach the conclusion that “They want to hang up a shelf.” But we could push this even further by asking why they want to hang a shelf in the first place. Do they have too many books? Are they running out of floor space? Are the walls empty and boring? This gives designers more intervention points to come up with solutions to meet users’ needs.
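The "keep asking why" loop can be sketched in a few lines of Python. This is only an illustration: the `WHY` table and the `root_cause_chain` function are hypothetical names, and the answers in the table are assumed for the drill example rather than taken from Norman.

```python
# Hypothetical answers to "why?" for the drill example.
# Each behavior or goal maps to the deeper goal behind it.
WHY = {
    "buy a drill": "put a hole in the wall",
    "put a hole in the wall": "hang up a shelf",
    "hang up a shelf": "store more books",
}


def root_cause_chain(behavior, why):
    """Follow the chain of "why?" answers until there is no further answer."""
    chain = [behavior]
    seen = {behavior}
    while chain[-1] in why:
        deeper = why[chain[-1]]
        if deeper in seen:  # stop if the answers start going in circles
            break
        chain.append(deeper)
        seen.add(deeper)
    return chain


chain = root_cause_chain("buy a drill", WHY)
for step in chain:
    print(step)
```

Every entry in the resulting chain is a possible intervention point: a designer could respond to the drill, the hole, the shelf, or the books.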

One of the lessons of root cause analysis is that every action has either an external or internal cause. When an internal goal causes a certain action, we call this goal-driven behavior. When an outside event or a condition of our environment causes an action, we call this event-driven behavior. Event-driven behaviors are often opportunistic actions: behaviors that arise in response to unexpected events.

- For example, if your goal is to do well on an academic exam, the action of studying would be a goal-driven behavior. If you’re trying to study in a noisy environment and reach for a pair of earplugs, that would be an event-driven behavior.

The example above shows how event-driven and goal-driven behavior can be intertwined, since the event-driven behavior (putting in earplugs) only occurs as part of the goal-driven behavior (studying). Designers need to be aware of the differences between event-driven and goal-driven behaviors in order to design for the user’s actual needs. In the studying example, designing better earplugs addresses only the external factors. Redesigning the entire environment to be more conducive to the internal goal of studying would address both the internal and external causes, creating a better user experience overall.

The Role of Storytelling in User Experience

Root cause analysis helps us make sense of other people’s behavior. To understand our own behavior, we turn to stories. Humans are born storytellers. When we’re faced with a jumble of information, our natural instinct is to organize it into a story that explains cause and effect. Stories help us make sense of our world and our place in it.

Reorganizing information into a cohesive story is usually a subconscious process. We operate under the assumption that there must be an underlying pattern connecting different pieces of information in a way that makes sense, regardless of whether such a pattern actually exists. In practice, this means that humans are really good at coming up with stories to explain our experiences, but not so good at determining whether those stories are actually true.

When it comes to understanding our physical environment, these stories take the form of conceptual models. We create a mental story to explain how something works, connecting whatever bits of evidence we have about that object into cause and effect patterns that make sense to us (but may be totally false).

A common source of false conceptual models is thermostats. Because a thermostat is such a small peek into a complex heating and cooling system, it gives us very few clues as to how it actually works. All we know is that if we’re too cold, we press a few buttons on the thermostat and the room eventually gets warmer. All the steps between “press buttons” and “feel warmer” are hidden and left to the imagination. This leads people to assume the thermostat controls a valve that opens a certain amount based on the setting, and that setting it higher will warm the room faster. In reality, most thermostats are a simple on/off switch, so setting a higher temperature has no effect on how fast the room warms up.
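A toy simulation makes the on/off model concrete. It is a sketch under simplifying assumptions that are not in the source: the furnace adds a fixed amount of heat per minute whenever it is on, the room loses no heat, and the function name `minutes_to_reach` and all numbers are hypothetical.

```python
def minutes_to_reach(target, setpoint, start=15.0, heat_per_minute=0.5):
    """Minutes until the room reaches `target` with the thermostat at `setpoint`.

    The thermostat is modeled as a plain on/off switch: the furnace runs at
    one fixed rate whenever the room is colder than the setpoint.
    Returns None if the furnace shuts off before the target is reached.
    """
    temp = start
    minutes = 0
    while temp < target:
        if temp >= setpoint:  # switch is off, so the room stops warming
            return None
        temp += heat_per_minute  # same heating rate regardless of setpoint
        minutes += 1
    return minutes


modest = minutes_to_reach(target=20.0, setpoint=20.0)
cranked = minutes_to_reach(target=20.0, setpoint=30.0)
print(modest, cranked)
```

Under these assumptions both calls return the same number of minutes, because the setpoint only decides when the furnace switches off, not how hard it works.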

Why Do We Blame Ourselves?

Faulty conceptual models often lead us to blame ourselves when an object doesn’t meet our expectations. If you push a door and it doesn’t open, you push again, but harder. You assume your action was flawed somehow—not that the door itself was poorly designed.

The tendency to blame ourselves when technology fails us is interesting because it is the exact opposite of our default pattern for assigning blame. Normally, when we perform poorly, we blame our environment (perhaps the sun was in our eyes, or the dog ate our homework). But when we perform well, we attribute it to our innate qualities, not the environment.

When we look at other people, this effect is reversed: we assume their successes are products of their environment, but their failures are due to their personal faults. (Shortform note: In psychology texts, this tendency is referred to as the “fundamental attribution error.” To learn more about attribution error and other cognitive biases, read our summary of Thinking, Fast and Slow.)

As new technologies pop up in every corner of our lives, we’re less and less likely to admit to struggling with them, especially when it appears that “everyone else understands this.” In reality, the opposite is true—when it comes to technology, our struggles are more likely due to design, not our own inadequacy. In other words, most people are probably experiencing the same difficulties, whether or not they speak up about it.

The Value of Failure

So, why are we willing to take the blame for failed interactions with technology, but not for our failures in general? One possibility is learned helplessness: the belief that you are doomed to fail in a given situation because you’ve experienced similar failures in the past. A history of repeated failures with a specific experience makes us assume that success is impossible, so we may as well stop trying. So, an encounter with even one or two overly confusing pieces of technology can make us conclude that we’re just not good with technology in general.

We can reframe these experiences using positive psychology. Positive psychology is a subfield of psychology that focuses on people’s strengths and positive emotions instead of their struggles. In this case, positive psychology requires a perspective shift. Instead of seeing repeated failures as evidence that we’re simply not skilled enough, we can actively choose to see failures as learning experiences. For example, if we struggle with a confusing computer program, we might put in the effort to troubleshoot the problem and ultimately end up with a much more thorough understanding of the program than if we’d succeeded on the first try.

Scientists use this practice every day. When an experiment fails, they troubleshoot, find the problem, and try the experiment again. The failure isn’t a bad omen; it provides important information that ultimately leads to results.

Human Errors Are Really System Errors

People make mistakes. This is a universal truth. But the technology around us often requires us to be perfect—to remember information accurately, never be distracted, and react in the same way every time.

In law, the idea of “human error” is accepted as a valid explanation for tragic outcomes. In reality, these errors are rarely “human”; they are usually faults of the system. If a piece of technology is designed without regard to human behavior and cognition, errors are practically guaranteed. Who is responsible for those errors?

Think back to the Three Mile Island incident from Chapter 1. One person misunderstanding an indicator light caused a massive nuclear incident. But why was the control system of a nuclear reactor set up in a way that made it possible for one small mistake to escalate into tragedy? The system was designed to be perfectly logical, but the design didn’t account for the real humans who would be operating it.

Norman recommends getting rid of the phrase “human error” altogether. Instead, we should think of interactions between person and machine the same way we think of interactions between people. When disagreements pop up, each person can clarify their intentions, propose solutions, and move on. The ideal system allows the user and the object to interact in the same way.

For example, some digital calendars allow you to enter dates with natural language. Instead of requiring dates to be entered in a single format, the user can type “August 3rd,” “8/3,” or “next Tuesday” and the event will be added to the proper date. The machine recognizes that humans sometimes phrase things differently, and is programmed to expect and accommodate that.
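Here is a minimal sketch of how a calendar might accept those three phrasings. It is not how any particular calendar product works: the `parse_date` function is hypothetical, “8/3” is assumed to mean month/day, dates without a year are assumed to fall in the current year, and “next Tuesday” is assumed to mean the first Tuesday after today.

```python
import re
from datetime import date, timedelta

MONTHS = ["january", "february", "march", "april", "may", "june", "july",
          "august", "september", "october", "november", "december"]
WEEKDAYS = ["monday", "tuesday", "wednesday", "thursday", "friday",
            "saturday", "sunday"]


def parse_date(text, today):
    """Return a date for a few common phrasings, or None if not understood."""
    s = text.strip().lower()
    try:
        # "8/3": month/day order is an assumption
        m = re.fullmatch(r"(\d{1,2})/(\d{1,2})", s)
        if m:
            return date(today.year, int(m.group(1)), int(m.group(2)))
        # "august 3rd": month name, day number, optional ordinal suffix
        m = re.fullmatch(r"([a-z]+)\s+(\d{1,2})(?:st|nd|rd|th)?", s)
        if m and m.group(1) in MONTHS:
            month = MONTHS.index(m.group(1)) + 1
            return date(today.year, month, int(m.group(2)))
    except ValueError:
        return None  # impossible date such as "13/40"
    # "next tuesday": first such weekday strictly after today
    m = re.fullmatch(r"next\s+([a-z]+)", s)
    if m and m.group(1) in WEEKDAYS:
        days_ahead = (WEEKDAYS.index(m.group(1)) - today.weekday() - 1) % 7 + 1
        return today + timedelta(days=days_ahead)
    return None


today = date(2021, 7, 30)  # a Friday, chosen for the example
results = [parse_date(t, today) for t in ("August 3rd", "8/3", "next Tuesday")]
print(results)
```

With this example date, all three inputs resolve to the same day. Returning `None` for unrecognized input, rather than raising an error, leaves room for the program to ask the user what they meant.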

(Shortform note: We’ll explore “human error” in much more depth in Chapter 5.)

Designing for Imperfect Humans

If “human errors” are really “system errors,” then designers are ultimately responsible for preventing those errors whenever possible. The goal is not to design a perfect system, but rather a system that anticipates inevitable errors, provides helpful feedback to correct those errors, and has no “slippery slopes” where one small mistake can set off a catastrophic chain reaction. Here are some specific recommendations for designers to put this into practice.

- Positive psychology: See user “errors” as feedback for where the design needs improvement. Instead of traditional error messages, provide feedback on the problem that allows the user to fix it immediately, without starting over or losing progress on the parts they’ve done correctly.

- Root cause analysis: When users struggle, continue asking “why” until you find the underlying problem. This fixes both the initial problem and any downstream problems that may stem from it.

Think back to the seven stages of action, and the questions posed by each stage. As a reminder, here are the seven stages and seven questions:

- Goal: What result do I want to achieve?

- Plan: What options do I have for achieving my goal?

- Specify: Which of these options will I choose?

- Perform: How do I execute my plan?

- Perceive: What happened when I did that?

- Interpret: What does that result mean?

- Compare: Did I reach my goal?

Users should be able to answer each of these questions easily. Designers can use the following tools to provide these answers:

- Discoverability: Users can easily figure out how to use the device, even without background knowledge.

- Feedback: The device gives immediate, meaningful information in response to user commands. It also gives baseline information (whether power is on, if maintenance is needed, etc.).

- Feedback should happen within 0.1 seconds of an action. Any longer and users may not automatically connect the feedback to their action.

- Conceptual model: The way the device works is easy to understand, even when important affordances are hidden.

- Affordances: The device performs the functions users need. This must be true for all potential users, not just the average user.

- Signifiers: Where affordances are not obvious, the device draws the user’s attention to them with signs, visible hardware, beeping, etc.

- Mappings: Controls for the device are laid out in a logical way that gives clues as to which controls go with which functions.

- Constraints: The device’s affordances are limited by physical and cultural constraints that help users narrow down potential functions. (Constraints are covered more thoroughly in Chapter 3.)

Exercise: Do a Root Cause Analysis

Trace a small behavior backwards to find the big picture goal.

Imagine you’re in a library. You overhear someone ask the librarian where to find the science section. What is their immediate goal? What bigger goals might be driving that immediate goal? (Remember, the easiest way to determine this is to keep asking “why?” about each goal!)

Without more information, determining the root cause of a behavior can be subjective. Try this process again, imagining the same library scenario, but with a different chain of goals driving the behavior of asking for the science section.

Did thinking through a root cause analysis in the above questions come naturally to you? Is this tool something you use in everyday life? In what areas of your life would this tool be useful?

Chapter 3.1: The Mechanics of Memory

In this chapter, we’ll explore the interaction between “knowledge in the head” (memory) and “knowledge in the world” (design features). A significant chunk of the chapter is dedicated to an overview of different types of memory and how they function. This section isn’t overly technical, and as we’ll see, having a basic understanding of memory has important implications for design.

“Knowledge in the Head” vs. “Knowledge in the World”

Norman refers to any information stored solely in memory as “knowledge in the head.” This applies to things like passwords on your computer (unless you’ve written them down) as well as knowing how to use a computer in the first place. Knowledge in the head can be either declarative or procedural.