1-Page Summary

Thinking, Fast and Slow concerns a few major questions: how do we make decisions? And in what ways do we make decisions poorly?

The book covers three areas of Daniel Kahneman’s research: cognitive biases, prospect theory, and happiness.

System 1 and 2

Kahneman defines two systems of the mind.

System 1: operates automatically and quickly, with little or no effort, and no sense of voluntary control

- Examples: Detect that one object is farther than another; detect sadness in a voice; read words on billboards; understand simple sentences; drive a car on an empty road.

System 2: allocates attention to the effortful mental activities that demand it, including complex computations. Often associated with the subjective experience of agency, choice and concentration

- Examples: Focus attention on a particular person in a crowd; exercise faster than is normal for you; monitor your behavior in a social situation; park in a narrow space; multiply 17 x 24.

System 1 automatically generates suggestions, feelings, and intuitions for System 2. If endorsed by System 2, intuitions turn into beliefs, and impulses turn into voluntary actions.

System 1 can be completely involuntary. You can’t stop your brain from completing 2 + 2 = ?, or from considering a cheesecake as delicious. You can’t unsee optical illusions, even if you rationally know what’s going on.

A lazy System 2 accepts what the faulty System 1 gives it, without questioning. This leads to cognitive biases. Even worse, cognitive strain taxes System 2, making it more willing to accept System 1. Therefore, we’re more vulnerable to cognitive biases when we’re stressed.

Because System 1 operates automatically and can’t be turned off, biases are difficult to prevent. Yet it’s also not wise (or energetically possible) to constantly question System 1, and System 2 is too slow to substitute in routine decisions. We should aim for a compromise: recognize situations when we’re vulnerable to mistakes, and avoid large mistakes when the stakes are high.

Cognitive Biases and Heuristics

Despite all the complexities of life, notice that you’re rarely stumped. You rarely face situations as mentally taxing as having to solve 9382 x 7491 in your head.

Isn’t it profound how we can make decisions without realizing it? You like or dislike people before you know much about them; you feel a company will succeed or fail without really analyzing it.

When faced with a difficult question, System 1 substitutes an easier question, or the heuristic question. The answer is often adequate, though imperfect.

Consider the following examples of heuristics:

- Target question: Is this company’s stock worth buying? Will the price increase or decrease?

- Heuristic question: How much do I like this company?

- Target question: How happy are you with your life?

- Heuristic question: What’s my current mood?

- Target question: How far will this political candidate get in her party?

- Heuristic question: Does this person look like a political winner?

These are related, but imperfect questions. When System 1 produces an imperfect answer, System 2 has the opportunity to reject this answer, but a lazy System 2 often endorses the heuristic without much scrutiny.

Important Biases and Heuristics

Confirmation bias: We tend to find and interpret information in a way that confirms our prior beliefs. We selectively pay attention to data that fit our prior beliefs and discard data that don’t.

“What you see is all there is”: We don’t consider the global set of alternatives or data. We don’t realize the data that are missing. Related:

- Planning fallacy: we habitually underestimate the amount of time a project will take. This is because we ignore the many ways things could go wrong and visualize an ideal world where nothing goes wrong.

- Sunk cost fallacy: we separate life into separate accounts, instead of considering the global account. For example, if you narrowly focus on a single failed project, you feel reluctant to cut your losses, but a broader view would show that you should cut your losses and put your resources elsewhere.

Ignoring reversion to the mean: If randomness is a major factor in outcomes, high performers today will suffer and low performers will improve, for no meaningful reason. Yet pundits will create superficial causal relationships to explain these random fluctuations in success and failure, observing that high performers buckled under the spotlight, or that low performers lit a fire of motivation.

Anchoring: When shown an initial piece of information, you bias toward that information, even if it’s irrelevant to the decision at hand. For instance, in one study, when a nonprofit requested $400, the average donation was $143; when it requested $5, the average donation was $20. The first piece of information (in this case, the suggested donation) influences our decision (in this case, how much to donate), even though the suggested amount shouldn’t be relevant to deciding how much to give.

Representativeness: You tend to use your stereotypes to make decisions, even when they contradict common sense statistics. For example, if you’re told about someone who is meek and keeps to himself, you’d guess the person is more likely to be a librarian than a construction worker, even though there are far more of the latter than the former in the country.

Availability bias: Vivid images and stronger emotions make items easier to recall and are overweighted. Meanwhile, important issues that do not evoke strong emotions and are not easily recalled are diminished in importance.

Narrative fallacy: We seek to explain events with coherent stories, even though the event may have occurred due to randomness. Because the stories sound plausible to us, it gives us unjustified confidence about predicting the future.

Prospect Theory

Traditional Expected Utility Theory

Traditional “expected utility theory” asserts that people are rational agents that calculate the utility of each situation and make the optimum choice each time.

If you preferred apples to bananas, would you rather have a 10% chance of winning an apple, or 10% chance of winning a banana? Clearly you’d prefer the former.

The expected utility theory explained cases like these, but failed to explain the phenomenon of risk aversion, where in some situations a lower-expected-value choice was preferred.

Consider: Would you rather have an 80% chance of gaining $100 and a 20% chance to win $10, or a certain gain of $80?

The expected value of the former is greater (at $82) but most people choose the latter. This makes no sense in classic utility theory—you should be willing to take a positive expected value gamble every time.

Furthermore, it ignores how differently we feel in the case of gains and losses. Say Anthony has $1 million and Beth has $4 million. Anthony gains $1 million and Beth loses $2 million, so they each now have $2 million. Are Anthony and Beth equally happy?

Obviously not - Beth lost, while Anthony gained. Puzzling with this concept led Kahneman to develop prospect theory.

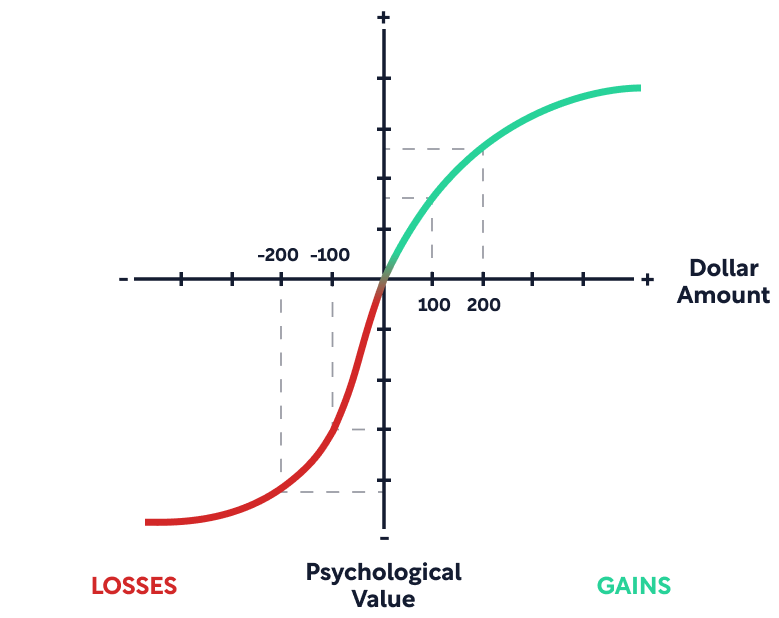

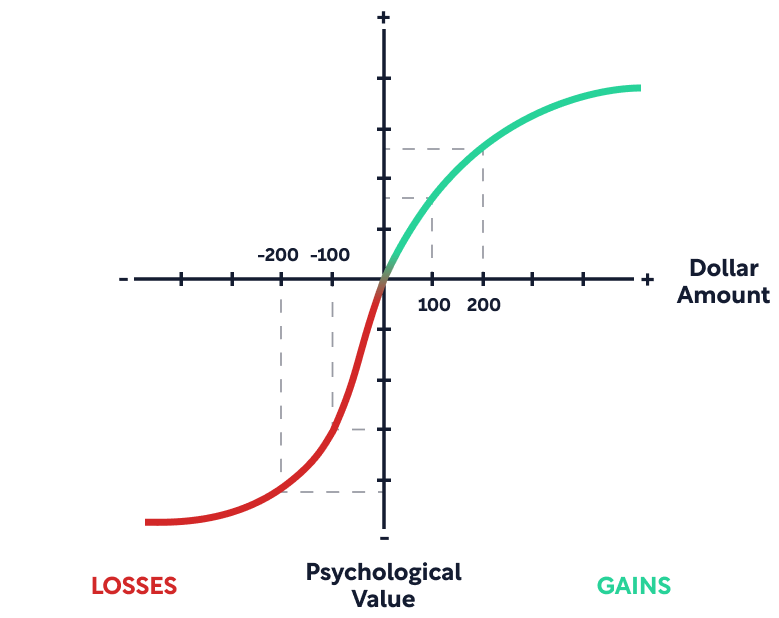

Prospect Theory

The key insight from the above example is that evaluations of utility are not purely dependent on the current state. Utility depends on changes from one’s reference point. Utility is attached to changes of wealth, not states of wealth. And losses hurt more than gains.

Prospect theory can be summarized in 3 points:

1. When you evaluate a situation, you compare it to a neutral reference point.

- Usually this refers to the status quo you currently experience. But it can also refer to an outcome you expect or feel entitled to, like an annual raise. When you don’t get something you expect, you feel crushed, even though your status quo hasn’t changed.

2. Diminishing marginal utility applies to changes in wealth (and to sensory inputs).

- Going from $100 to $200 feels much better than going from $900 to $1,000. The more you have, the less significant the change feels.

3. Losses of a certain amount trigger stronger emotions than a gain of the same amount.

- Evolutionarily, the organisms that treated threats more urgently than opportunities tended to survive and reproduce better. We have evolved to react extremely quickly to bad news.

There are a few practical implications of prospect theory.

Possibility Effect

Consider which is more meaningful to you:

- Going from a 0% chance of winning $1 million to 5% chance

- Going from a 5% chance of winning $1 million to 10% chance

Most likely you felt better about the first than the second. The mere possibility of winning something (that may still be highly unlikely) is overweighted in its importance. We fantasize about small chances of big gains. We obsess about tiny chances of very bad outcomes.

Certainty Effect

Now consider how you feel about these options on the opposite end of probability:

- In a surgical procedure, going from a 90% success rate to 95% success rate.

- In a surgical procedure, going from a 95% success rate to 100% success rate

Most likely, you felt better about the second than the first. Outcomes that are almost certain are given less weight than their probability justifies. 95% success rate is actually fantastic! But it doesn’t feel this way, because it’s not 100%.

Status Quo Bias

You like what you have and don’t want to lose it, even if your past self would have been indifferent about having it. For example, if your boss announces a raise, then ten minutes later said she made a mistake and takes it back, this is experienced as a dramatic loss. However, if you heard about this happening to someone else, you likely would see the change as negligible.

Framing Effects

The context in which a decision is made makes a big difference in the emotions that are invoked and the ultimate decision. Even though a gain can be logically equivalently defined as a loss, because losses are so much more painful, different framings may feel very different.

For example, a medical procedure with a 90% chance of survival sounds more appealing than one with a 10% chance of mortality, even though they’re identical.

Happiness and the Two Selves

The new focus of Kahneman’s recent research is happiness. Happiness is a tricky concept. There is in-the-moment happiness, and there is overall well being. There is happiness we experience, and happiness we remember.

Kahneman presents two selves:

- The experiencing self: the person who feels pleasure and pain, moment to moment. This experienced utility would best be assessed by measuring happiness over time, then summing the total happiness felt over time. (In calculus terms, this is integrating the area under the curve.)

- The remembering self: the person who reflects on past experiences and evaluates it overall.

The remembering self factors heavily in our thinking. After a moment has passed, only the remembering self exists when thinking about our past lives. The remembering self is often the one making future decisions.

But the remembering self evaluates differently from the experiencing self in two critical ways:

- Peak-end rule: The overall rating is determined by the peak intensity of the experience and the end of the experience. It does not care much about the averages throughout the experience.

- Duration neglect: The duration of the experience has little effect on the memory of the event.

We tend to prioritize the remembering self (such as when we choose where we book vacations, or in our willingness to endure pain we will forget later) and don’t give enough to the experiencing self.

For example, would you take a vacation that was very enjoyable, but required that at the end you take a pill that gives you total amnesia of the event? Most would decline, suggesting that memories are a key, perhaps dominant, part of the value of vacations. The remembering self, not the experiencing self, chooses vacations!

Kahneman’s push is to weight the experiencing self more. Spend more time on things that give you moment-to-moment pleasures, and diminish moment-to-moment pain. Try to reduce your commute, which is a common source of experienced misery. Spend more time in active pleasure activities, such as socializing and exercise.

Focusing Illusion

Considering overall life satisfaction is a difficult System 2 question. When considering life satisfaction, it’s difficult to consider all the factors in your life, weigh those factors accurately, then score your factors.

As is typical, System 1 substitutes the answer to an easier question, such as “what is my mood right now?”, focusing on significant events (both achievements and failures), or recurrent concerns (like illness).

The key point: Nothing in life is as important as you think it is when you are thinking about it. Your mood is largely determined by what you attend to. You get pleasure/displeasure from something when you think about it.

For example, even though Northerners despise their weather and Californians enjoy theirs, in research studies, climate makes no difference in life satisfaction. Why is this? When people are asked about life satisfaction, climate is just a small factor in the overall question—they’re much more worried about their career, their love life, and the bills they need to pay.

When you forecast your own future happiness, you overestimate the effect a change will have on you (like getting a promotion), because you overestimate how salient the thought will be in future you’s mind. In reality, future you has gotten used to the new environment and now has other problems to worry about.

Part 1-1: Two Systems of Thinking

We believe we’re being rational most of the time, but really much of our thinking is automatic, done subconsciously by instinct. Most impressions arise without your knowing how they got there. Can you pinpoint exactly how you knew a man was angry from his facial expression, or how you could tell that one object was farther away than another, or why you laughed at a funny joke?

This becomes more practically important for the decisions we make. Often, we’ve decided what we’re going to do before we even realize it. Only after this subconscious decision does our rational mind try to justify it.

The brain does this to save on effort, substituting easier questions for harder questions. Instead of thinking, “should I invest in Tesla stock? Is it priced correctly?” you might instead think, “do I like Tesla cars?” The insidious part is, you often don’t notice the substitution. This type of substitution produces systematic errors, also called biases. We are blind to our blindness.

System 1 and System 2 Thinking

In Thinking, Fast and Slow, Kahneman defines two systems of the mind:

System 1: operates automatically and quickly, with little or no effort, and no sense of voluntary control

- Examples: Detect that one object is farther than another; detect sadness in a voice; read words on billboards; understand simple sentences; drive a car on an empty road.

System 2: allocates attention to the effortful mental activities that demand it, including complex computations. Often associated with the subjective experience of agency, choice and concentration

- Examples: Focus attention on a particular person in a crowd; exercise faster than is normal for you; monitor your behavior in a social situation; park in a narrow space; multiply 17 x 24.

Properties of System 1 and 2 Thinking

System 1 can be completely involuntary. You can’t stop your brain from completing 2 + 2 = ?, or from considering a cheesecake as delicious. You can’t unsee optical illusions, even if you rationally know what’s going on.

System 1 can arise from expert intuition, trained over many hours of learning. In this way a chess master can recognize a strong move within a second, where it would take a novice several minutes of System 2 thinking.

System 2 requires attention and is disrupted when attention is drawn away. More on this next.

System 1 automatically generates suggestions, feelings, and intuitions for System 2. If endorsed by System 2, intuitions turn into beliefs, and impulses turn into voluntary actions.

System 1 can detect errors and recruits System 2 for additional firepower.

- Kahneman tells a story of a veteran firefighter who entered a burning house with his crew, felt something was wrong, and called for them to get out. The house collapsed shortly after. He only later realized that his ears were unusually hot but the fire was unusually quiet, indicating the fire was in the basement.

Because System 1 operates automatically and can’t be turned off, biases are difficult to prevent. Yet it’s also not wise (or energetically possible) to constantly question System 1, and System 2 is too slow to substitute in routine decisions. “The best we can do is a compromise: learn to recognize situations in which mistakes are likely and try harder to avoid significant mistakes when the stakes are high.”

In summary, most of what you consciously think and do originates in System 1, but System 2 takes over when the situation gets difficult. System 1 normally has the last word.

A Lazy System 2 Accepts the Errors of System 1

Consider these questions, and go through them quickly, trusting your intuition.

1) A bat and ball cost $1.10. The bat costs one dollar more than the ball. How much does the ball cost?

2) How many murders happen in Michigan each year?

3) Does the conclusion follow from the premises?

Ready to see the answers?

1) The answer is $0.05. The common intuitive (and wrong) answer is $0.10.

2) The trick is whether you remember that Detroit is in Michigan. People who remember this estimate a number that is much higher (and more accurate) than those who forget.

3) The answer is no—all roses may not fit into the subcategory of flowers that fade quickly.

===

All of your answers, if you really spent time on it, could be verified by deliberate System 2 thinking. In the first question, it’s easy to see that if the ball cost $0.10, the total would be $1.20, which is clearly incompatible with the question. For the second question, if you had to enumerate the major cities of Michigan, you would likely list Detroit.

For some people, spending enough time would be sufficient to get the answers right. But many people, even if given unlimited time, might not even think to apply their System 2 to question their answers and find different approaches to the question. Over 50% of students at Harvard and MIT gave the wrong answer to the bat-and-ball question; over 80% at less selective universities.

This is the insidious problem of a “lazy System 2.” System 1 surfaces the intuitive answer for System 2 to evaluate. But a lazy System 2 doesn’t properly do its job - it accepts what System 1 offers without expending the small investment of effort that could have rejected the wrong answer.

Even worse, this aggravates confirmation bias. A piece of information that fits your prior beliefs might evoke a positive System 1 feeling, while your System 2 might never pause to evaluate the validity of the piece of information. If you believe a conclusion is true, you might believe arguments that support it, even when the arguments are unsound.

It’s useful then to distinguish between intelligence and rationality.

- Intelligence might be considered the full computational horsepower of a person’s brain.

- Rationality is resistance to mental laziness; not accepting a superficially plausible answer; being more skeptical of intuitions; tending to put in the hard work of checking the logic; and thus immunity to biases.

In other words, a powerful system 2 is useless if the person doesn’t recognize the need to override their system 1 response.

The theme here, that will recur through the book, is that people are overconfident and place too much faith in their intuitions. Further, they find cognitive effort unpleasant and avoid it as much as possible.

Part 1-2: System 2 Has a Maximum Capacity

System 2 thinking has a limited budget of attention - you can only do so many cognitively difficult things at once.

This limitation is true when doing two tasks at the same time - if you’re navigating traffic on a busy highway, it becomes far harder to solve a multiplication problem.

This limitation is also true when one task comes after another - depleting System 2 resources earlier in the day can lower inhibitions later. For example, a hard day at work will make you more susceptible to impulsive buying from late-night infomercials. This is also known as “ego depletion,” or the idea that you have a limited pool of willpower or mental resources that can be depleted each day.

All forms of voluntary effort - cognitive, emotional, physical - seem to draw at least partly on a shared pool of mental energy.

- Stifling emotions during a sad film worsens physical stamina later.

- Memorizing a list of seven digits makes subjects more likely to yield to more decadent desserts.

Differences in Demanding Tasks

The law of least effort states that “if there are several ways of achieving the same goal, people will eventually gravitate to the least demanding course of action.”

What makes some cognitive operations more demanding than others? Here are a few examples:

- Holding in memory several ideas that require separate actions (like memorizing a supermarket shopping list). System 1 is not capable of dealing with multiple distinct topics at once.

- Obeying an instruction that overrides habitual responses, thus requiring “executive control.”

- This covers cognitive, emotional, and physical impulses of all kinds.

- Switching between tasks.

- Time pressure.

In the lab, the strain of a cognitive task can be measured by pupil size - the harder the task, the more the pupil dilates, in real time. Heart rate also increases.

Kahneman cites one particular task as the limit of what most people can do in the lab, dilating the pupil by 50% and increasing heart rate by 7bpm. The task is “Add-3”:

- Write several 4 digit numbers on separate index cards.

- To the beat of a metronome, read the four digits aloud.

- Wait for two beats, then report a string in which each of the original digits is incremented by 3 (eg 4829 goes to 7152).

If you make the task any harder than this, most people give up. Mentally speaking, this is sprinting as hard as you can, whereas casual conversation is a leisurely stroll.

Because System 2 has limited resources, stressful situations make it harder to think clearly. Stressful situations may be caused by:

- Physical exertion: In intense exercise, you need to apply mental resistance to the urge to slow down. Even a physical activity as relaxed as taking a stroll involves the use of mental resources. Therefore, the most complex arguments might be done while sitting.

- The presence of distractions.

- Exercising self-control or willpower in general.

Because of the fixed capacity, you cannot will yourself to think harder in the moment and surpass the “Add-3” limit, even with a gun to your head. In the same way, you cannot sprint any faster than you can possibly sprint.

How to Make Difficult Tasks Easier

But there are some ways to make a mentally demanding task easier:

- Get more skilled. The more skilled you get at a task, the less energy it consumes. Thus experts can solve situations that require far more intense work for novices (such as diagnosing a disease for doctors, or making a chess move for a grandmaster).

- In a state of flow, you can concentrate energy without having to exert willpower, thus freeing your entire mental faculty to the task. Flow has been described as one of the most enjoyable states of life.

- (Shortform note: flow theory suggests three conditions have to be met to get into flow: 1) Clear set of goals and progress. 2) Clear and immediate feedback, to adjust your performance to maintain flow. 3) Good balance between perceived challenges and perceived skills - a confidence in your ability to complete the task.)

- Get incentives for your behavior. When given proper incentives, people resisted ego depletion.

- Eat. Consuming more glucose seems to undo ego depletion, since cognitive effort depletes blood glucose.

- A well-known study of Iraeli judges showed that, after meals, the judges were more lenient about awarding parole to prisoners.

- Playing computer games requiring attention and control may improve executive control and intelligence scores.

As we’ll find later, when System 2 is taxed, it has less firepower to question the conclusions of System 1.

Cognitive Ease

Cognitive ease is an internal measure of how easy or strained your cognitive load is.

In a state of cognitive ease, you’re probably in a good mood, believe what you hear, trust your intuitions, feel the situation is familiar, are more creative, and are superficial in your thinking. System 1 is humming along, and System 2 accepts what System 1 says.

In a state of cognitive strain, you’re more vigilant and suspicious, feel less comfortable, invest more effort, and trigger System 2. You make fewer errors, but you’re also less intuitive and less creative.

Cognitive ease increases with certain inputs or characteristics of the task, including:

- Repeated experience

- Clear display

- Primed idea

- Good mood

You don’t consciously know exactly what it is that makes it easy or strained. Rather, the ease of the task gets compressed into a single “is this easy” factor that then determines the level of mental strain you need to apply.

Drilling down into each input:

Repeated Experience / Mere Exposure / Familiarity

Exposing someone to an input repeatedly makes them like it more. Having a memory of a word, phrase, or idea makes it easier to see again.

Example experiments:

- People are exposed to a set of made-up names. A few days later, they are given a list of names and asked to point out which names belong to celebrities. The subjects are more likely to falsely identify the random names as those of celebrities if they were previously exposed to them.

- People are exposed to a set of Turkish words at different frequencies. When they are later asked to rate a set of words on a good-bad scale, the subjects perceive the more frequently shown words as more positive.

Even a single occurrence can make an idea familiar.

- Say a car lights on fire a block away from your house. The first time, it’s shocking. The second time you see it, it is far less surprising than the first time it happened.

If the new idea fits your existing mental framework, you will digest it more easily.

Evolutionarily, this benefits the organism by saving cognitive load. If a stimulus has occurred in the past and hasn’t caused danger, later occurrences of that stimulus can be discarded. This saves cognitive energy for new surprising stimuli that might indicate danger.

However, this can cause a potentially dangerous bias. You will tend to like the ideas you are exposed to most often, regardless of the merit of those ideas.

You cannot easily distinguish familiarity from the truth. If you think an idea is true, is it only because it’s been repeated so often, or is it actually true? (Shortform note: thus, be aware of “common sense” intuitions that seem true merely because they’re repeated often, like “searing meat seals in the juices.”)

Furthermore, if you trust a source, you are more likely to believe what is said.

Clear Display

You can use the idea of cognitive ease to convince people to believe in the truth of something you’ve written. In general, to be more persuasive, make the message as easy to digest as possible. In other words, ease your listener’s cognitive load.

Consider the two statements:

- Adolf Hitler was born in 1891.

- Adolf Hitler was born in 1887.

Which one do you think is correct?

You likely found the first one a bit more believable, because it stood out. In reality, Hitler was born in 1889.

Here are tips to making your message more persuasive:

- Increase the contrast and make it easier to read. Use high-quality paper.

- If colored, use vivid colors of blue or red rather than pale neutral colors.

- Use simpler language. Pretentious language is interpreted as a sign of poor intelligence and low credibility.

- Make your ideas rhyme and put them in verse if you can.

- “Woes unite foes.” is interpreted as more meaningful as “Woes unite enemies.”

- When citing a source, choose one with an easier name to pronounce.

(Shortform note: In the O.J. Simpson trial, both mere exposure and clear display were used in catchphrases like “if the glove doesn’t fit, you must acquit.”)

In contrast, making cognition difficult actually activates System 2.

- For the $1.10 bat and ball problem you saw in the last chapter, making print smaller and difficult to read increased the accuracy of responses significantly.

Emotions

Cognitive ease is associated with good feelings. In contrast, cognitive strain tends to promote bad feelings.

- Stocks with phonetically reasonable symbols (like KAR) outperform non-words like PXG or RDO. This is likely because symbols you can pronounce lessen your cognitive load. This leads to more positive associations with the stock.

The causality also works in the opposite direction: your emotional state affects your thinking.

- In the three-word creative association test (in Part 1-3, below), people instructed to think about sad memories were half as accurate in their intuition as those who thought about happy memories.

- When we are uncomfortable and unhappy, we lose touch with our intuition. (But we are also more engaged with our System 2).

You Can’t Always Tell Why Something is Easy

Again, cognitive ease is a summary feeling that takes in multiple inputs and squishes them together to form a general impression. When you feel cognitively at ease, you’re not always aware oft why - it might be that the idea is actually sound and fits your correct view of the world, or that it’s simply printed with high contrast and has a nice rhyme.

Cognitive ease is associated with a “pleasant feeling of truth.” But things that seem intuitively true may actually be false on inspection.

The Link Between System 2 Capacity and Intelligence

Given that self-control and cognitive tasks draw from the same pool of energy, is there a relationship between self-control and intelligence?

Walter Mischel’s famous marshmallow experiment showed that children who better endured delayed gratification showed significantly better life outcomes, measured by SAT scores, education attainment, and body mass index.

Inversely, those who score lower on the Cognitive Reflection Test show more impulsive behavior, such as being more willing to pay for overnight delivery, or being less willing to wait for more time to receive more money. Poor scorers also show a greater tendency to fall prey to fallacies like the gambler’s fallacy (the assumption that if something occurs more frequently than usual now, it will happen less frequently than usual in the future) and sunk cost fallacy (discussed in Part 4-4).

As with most things in life, it appears that executive control has been attributed to both genetics and environment (parenting techniques).

Part 1-3: System 1 is Associative

Think of your brain as a vast network of ideas connected to each other. These ideas can be concrete or abstract. The ideas can involve memories, emotions, and physical sensations.

When one node in the network is activated, say by seeing a word or image, it automatically activates its surrounding nodes, rippling outward like a pebble thrown in water.

As an example, consider the following two words:

“Bananas Vomit”

Suddenly, within a second, reading those two words may have triggered a host of different ideas. You might have pictured yellow fruits; felt a physiological aversion in the pit of your stomach; remembered the last time you vomited; thought about other diseases - all done automatically without your conscious control.

The evocations can be self-reinforcing - a word evokes memories, which evoke emotions, which evoke facial expressions, which evoke other reactions, and which reinforce other ideas.

Links between ideas consist of several forms:

- Cause → Effect

- Belonging to the Same Category (lemon → fruit)

- Things to their properties (lemon → yellow, sour)

Association is Fast and Subconscious

In the next exercise, you’ll be shown three words. Think of a new word that fits with each of the three words in a phrase.

Here are the three words:

cottage Swiss cake

Ready?

A common answer is “cheese.” Cottage cheese, Swiss cheese, and cheesecake. You might have thought of this quickly, without really needing to engage your brain deeply.

The next exercise is a little different. You’ll be given two sets of three words. Within seconds, decide which one feels better, without defining the new word:

sleep mail switch

salt deep foam

Ready?

You might have found that the second one felt better. Isn’t that odd? There is a very faint signal from the associative machine of System 1 that says “these three words seem to connect better than the other three.” This occurred long before you consciously found the word (which is sea).

Association with Context

For another example, consider the sentence “Ana approached the bank.”

You automatically pictured a lot of things. The bank as a financial institution, Ana walking toward it.

Now let’s add a sentence to the front: “The group canoed down the river. Ana approached the bank.”

This context changes your interpretation automatically. Now you can see how automatic your first reading of the sentence was, and how little you questioned the meaning of the word “bank.”

Associations Evaluate Surprise

The purpose of associations is to prepare you for events that have become more likely, and to evaluate how surprising the event is.

The more external inputs associate with each other, and the more they associate with your internal mind, the less surprising an event is, the more System 1 acts by intuition, and the harder it is to detect errors.

Consider this sentence: “how many animals of each kind did Moses take into the ark?”

The correct answer is none - it was Noah who took animals into the ark. But the idea of animals, Moses, and the ark all set up a biblical context that associated together. Moses was not a surprising name in this context.

However, say “how many animals of each kind did Kanye West take into the ark?” and the illusion falls apart. Kanye West is not congruent with the mention of animals and ark, and so the name evokes surprise, thus calling in System 2 to help.

Normative

System 1 maintains a model of your world by determining what is normal and not.

Violations of normality can be detected extremely quickly, within fractions of a second. If you hear someone with an upper-class English accent say, “I have a large tattoo on my rear end,” your brain spikes in activity within 0.2 seconds. This is surprisingly fast, given the large amount of world knowledge that needs to be invoked to recognize the discrepancy (that rich people don’t typically get tattoos).

We also communicate by norms and shared knowledge. When I mention a table, you know it’s a solid object with a level surface and fewer than 25 legs. It’s your System-1 brain that makes this immediate, unconscious association.

(Shortform note: This also explains why many moral arguments are based around semantics. In different communities, people will have different conceptions of what the same word means, like “life” in the abortion debate. The norms are entirely different, but often people don’t realize this.)

Building a Narrative / Seeing Causes and Intentions

The System-1 brain wants to make sense of the world. It wants large events to cause effects, and it wants effects to have causes. It tries to bring coherence to a set of data points and sees interpretations that may not be explicitly mentioned.

For example: “After spending a day exploring sites in the crowded streets of New York, Jane discovered that her wallet was missing.”

Immediately, you likely pictured a pickpocket. If you were asked about this sentence later, you would likely recall the theft, even if it wasn’t stated in the text.

Once you receive a surprising data point, you also interpret new data to fit the narrative.

Imagine you’re observing a restaurant, and a man tastes a soup and suddenly yelps. This is surprising. Now two things can happen that will change your interpretation of the event:

- The server touches the man’s shoulder and he yelps.

- A woman at a different table drinks her soup, and she also yelps.

In the first case, you’ll think the man is hyper-reactive. In the second case, there’s something wrong with the soup. In both cases, System 1 assigns cause and effect without any conscious thought.

The Flaws of Associative Thinking

All of this automatic associative thinking works much of the time, but it fails when you apply causal thinking to situations that require statistical thinking. We’ll cover many more of these biases throughout this summary.

The converse is also true. Complexity is mentally taxing. Maintaining multiple incompatible explanations requires mental effort and System 2. In contrast, clear cause and effect, and easy associative relationships, are much less taxing on the brain. It’s easier to see the world in black and white than in shades of gray.

Priming and Associations

Shortform warning: This chapter of Thinking, Fast and Slow cites the highly controversial literature on priming, which has failed to replicate in follow-up studies and has been accused of p-hacking or publishing only positive results.

Kahneman admitted: “I placed too much faith in underpowered studies...The experimental evidence for the ideas I presented in that chapter was significantly weaker than I believed when I wrote it.” And the “size of behavioral priming effects...cannot be as large and as robust as my chapter suggested.”

The concept of priming took association beyond mere thought, to the functional level of ideomotor activation. When an idea is triggered, its associations can cause you to behave in a meaningfully different way without your consciously realizing it.

Examples from research studies include:

- People who were primed with money (by images or words) acted more selfishly and independently in group activities.

- Voters who submitted ballots at a school location were more likely to vote in favor of educational propositions.

- People who were primed with words related to the elderly (“Florida, forgetful, wrinkle”) walked more slowly.

- A cafeteria had a “by your honor” collection jar for shared milk. An image that showed human eyes looking at the person gathered more donations than pictures of flowers.

In the reverse direction, behaving in a certain way can trigger ideas and emotions:

- People were instructed to listen to messages through headphones and were instructed to move their heads to check for distortions of sound. Those instructed to nod up and down were more likely to agree with the messages than those shaking heads side to side.

- People who were instructed to think of a shameful thought were more likely to complete the word SO_P as SOAP rather than SOUP. The thesis: feeling morally dirty triggers the desire to cleanse: the “Lady Macbeth Effect.”

The implications of priming are profound - if we are surrounded everyday by deliberately constructed images, how can that not affect our behavior?

- Being surrounded by images of money and consumption make us more selfish.

- Seeing images of filial respect makes us nicer to our parents.

- Seeing propaganda of an autocratic leader makes you feel watched and reduces spontaneous thought and independent action.

And likewise, if we are required to behave in a certain way (in a workplace, in a social community, as citizens), does that not affect our cognition and beliefs?

The effects may not be huge - being surrounded by images of money doesn’t make you violate the law or put yourself in physical harm to get money. But a few percentage points by swinging marginal voters can make a difference in elections.

Shortform warning: Many of the studies cited were later found to have insufficient power, such that either the studies were being p-hacked, or only cherry-picked positive results were being published. It appears the field was too eager to jump on evidence that fit their view of the world.

Note the irony about being biased about biases. When priming came out, the field of psychology/behavioral economics had just undergone a paradigm change of humans being subject to systematic biases. The field hungered for confirming evidence itself, becoming too ready to accept a neat story (priming) without employing its System 2 thinking to question whether the evidence was valid!

Kahneman notes that he’s still a believer in the idea of priming, “There is adequate evidence for all the building blocks: semantic priming, significant processing of stimuli that are not consciously perceived, and ideo-motor activation....I am still attached to every study that I cited, and have not unbelieved them, to use Daniel Gilbert’s phrase.”

Part 1-4: How We Make Judgments

System 1 continuously monitors what’s going on outside and inside the mind and generates assessments with little effort and without intention. The basic assessments include language, facial recognition, social hierarchy, similarity, causality, associations, and exemplars.

- In this way, you can look at a male face and consider him competent (for instance, if he has a strong chin and a slight confident smile).

- The survival purpose is to monitor surroundings for threats.

However, not every attribute of the situation is measured. System 1 is much better at determining comparisons between things and the average of things, not the sum of things. Here’s an example:

In the below picture, try to quickly determine what the average length of the lines is.

Now try to determine the sum of the length of the lines. This is less intuitive and requires System 2.

Unlike System 2 thinking, these basic assessments of System 1 are not impaired when the observer is cognitively busy.

In addition to basic assessments: System 1 also has two other characteristics:

1) Translating Values Across Dimensions, or Intensity Matching

System 1 is good at comparing values on two entirely different scales. Here’s an example.

Consider a minor league baseball player. Compared to the rest of the population, how athletic is this player?

Now compare your judgment to a different scale: If you had to convert how athletic the player is into a year-round weather temperature, what temperature would you choose?

Just as a minor league player is above average but not the top tier, the temperature you chose might be something like 80 Fahrenheit.

As another example, consider comparing crimes and punishments, each expressed as musical volume. If a soft-sounding crime is followed by a piercingly loud punishment, then this means a large mismatch that might indicate injustice.

2) Mental Shotgun

System 1 often carries out more computations than are needed. Kahneman calls this “mental shotgun.”

For example, consider whether each of the following three statements is literally true:

- Some roads are snakes.

- Some jobs are snakes.

- Some jobs are jails.

All three statements are literally false. The second statement likely registered more quickly as false to you, while the other two took more time to think about because they are metaphorically true. But even though finding metaphors was irrelevant to the task, you couldn’t help noticing them - and so the mental shotgun slowed you down. Your System-1 brain made more calculations than it had to.

Heuristics: Answering an Easier Question

Despite all the complexities of life, notice that you’re rarely stumped. You rarely face situations as mentally taxing as having to solve 9382 x 7491 in your head.

Isn’t it profound how we can make decisions at all without realizing it? You like or dislike people before you know much about them; you feel a company will succeed or fail without really analyzing it.

When faced with a difficult question, System 1 substitutes an easier question, or the heuristic question. The answer is often adequate, though imperfect.

Consider the following examples of heuristics:

- Target question: How much is saving an endangered species worth to me?

- Heuristic question: How much emotion do I feel when I think of dying dolphins?

- Target question: How happy are you with your life?

- Heuristic question: What’s my current mood?

- Target question: How should financial advisers who commit fraud be punished?

- Heuristic question: How much anger would I feel if I were ripped off?

- Target question: How far will this political candidate get in her party?

- Heuristic question: Does this person look like a political winner?

Based on what we learned previously about characteristics of System 1, here’s how heuristics are generated:

- System 1 applies a mental shotgun with a slew of basic assessments and heuristics about the problem. These impressions are readily available without thought.

- Many impressions are more emotion-based than we would like.

- Next, System 1 uses intensity matching to scale the relevant assessments to the target question.

- How painful the image of a dying dolphin feels is scaled to how much money you’re willing to contribute.

- Note that this doesn’t actually directly answer the question - sometimes, the heuristic is actually asking a completely different question.

When System 1 produces an imperfect answer, System 2 has the opportunity to reject this answer, but a lazy System 2 often endorses the heuristic without much scrutiny.

Even more insidiously, a lazy System 2 will feel as though it’s applied tremendous brainpower to the question.

Example: Order Matters

In one experiment, students were asked:

When presented in that order, there was no correlation between the answers.

When the questions were reversed, the correlation was very high. The first question prompted an emotional response, which was then used to answer the happiness question. Here the heuristic of “how many dates did I go on?” was used to scale to the very different question, “how happy am I?”

Part 1-5: Biases of System 1

Putting it all together, we are most vulnerable to biases when:

- System 1 forms a narrative that conveniently connects the dots and doesn’t express surprise.

- Because of the cognitive ease by System 1, System 2 is not invoked to question the data. It merely accepts the conclusions of System 1.

In day-to-day life, this is acceptable if the conclusions are likely to be correct, the costs of a mistake are acceptable, and if the jump saves time and effort. You don’t question whether to brush your teeth each day, for example.

In contrast, this shortcut in thinking is risky when the stakes are high and there’s no time to collect more information, like when serving on a jury, deciding which job applicant to hire, or how to behave in an weather emergency.

We’ll end part 1 with a collection of biases.

What You See is All There Is: WYSIATI

When presented with evidence, especially those that confirm your mental model, you do not question what evidence might be missing. System 1 seeks to build the most coherent story it can - it does not stop to examine the quality and the quantity of information.

In an experiment, three groups were given background to a legal case. Then one group was given just the plaintiff’s argument, another the defendant’s argument, and the last both arguments.

Those given only one side gave a more skewed judgment, and were more confident of their judgments than those given both sides, even though they were fully aware of the setup.

We often fail to account for critical evidence that is missing.

Halo Effect

If you think positively about something, it extends to everything else you can think about that thing.

Say you find someone visually attractive and you like this person for that reason. As a result, you are more likely to find her intelligent or capable, even if you have no evidence of this. Even further, you tend to like intelligent people, and now that you think she’s intelligent, you like her better than you did before, causing a feedback loop.

In other words, your emotional response fills in the blanks for what’s cognitively missing from your understanding.

The Halo Effect forms a simpler, more coherent story by generalizing one attribute to the entire person. Inconsistencies about a person, if you like one thing about them but dislike another, are harder to understand. “Hitler loved dogs and little children” is troubling for many to comprehend.

Ordering Effect

First impressions matter. They form the “trunk of the tree” to which later impressions are attached like branches. It takes a lot of work to reorder the impressions to form a new trunk.

Consider two people who are described as follows:

- Amos: intelligent, hard-working, strategic, suspicious, selfish

- Barry: selfish, suspicious, strategic, hard-working, intelligent

Most likely you viewed Amos as the more likable person, even though the five words used are identical, just differently ordered. The initial traits change your interpretation of the traits that appear later.

This explains a number of effects:

- Pygmalion effect: A person’s expectation of a target person affects the target person’s performance. Have higher expectations of a person, and they will tend to do better.

- In an experiment, students were randomly ordered in a report of academic performance. This report was then given to teachers. Students who were randomly rated as more competent ended the year with better academic scores, even though they started the school year with no average difference.

- Kahneman previously graded exams by going through an entire student’s test before going to the next student’s. He found that the student’s first essay dramatically influenced his interpretation of later essays - an excellent first essay would earn the student benefit of the doubt on a poor second essay. A poor first essay would cast doubt on later effective essays. He subverted this by batching by essay and iterating through all students.

- Work meetings often polarize around the first and most vocal people to speak. Meetings would better yield the best ideas if people could write down opinions beforehand.

- Witnesses are not allowed to discuss events in a trial before testimony.

The antidote to the ordering effect:

- Before having a public discussion on a topic, elicit opinions from the group confidentially first. This avoids bias in favor of the first speakers.

Confirmation Bias

Confirmation bias is the tendency to find and interpret information in a way that confirms your prior beliefs.

This materializes in a few ways:

- We selectively pay attention to data that fit our prior beliefs and discard data that don’t.

- We seek out sources that tend to give us confirmatory data, and reject sources that contradict our beliefs.

- We recall information that confirms our beliefs more readily than contradictory information.

Mere Exposure Effect

Exposing someone to an input repeatedly makes them like it more. Having a memory of a word, phrase, or idea makes it easier to see again.

See the discussion in Part 1.2.

Narrative Fallacy

This is explained more in Part 2, but it deals with System 1 thinking.

People want to believe a story and will seek cause-and-effect explanations in times of uncertainty. This helps explain the following:

- Stock market movements are explained like horoscopes, where the same explanation can be used to justify both rises and drops (for instance, the capture of Saddam Hussein was used to explain both the rise and subsequent fall of bond prices).

- Most religions explain the creation of earth, of humans, and of the afterlife.

- Famous people are given origin stories - Steve Jobs reached his success because of his abandonment by his birth parents. Sports stars who lose a championship have the loss attributed to a host of reasons.

Once a story is established, it becomes difficult to overwrite. (Shortform note: this helps explain why frauds like Theranos and Enron were allowed to perpetuate - observers believed the story they wanted to hear.)

Affect Heuristic

How you like or dislike something determines your beliefs about the world.

For example, say you’re making a decision with two options. If you like one particular option, you’ll believe the benefits are better and the costs/risks more manageable than those of alternatives. The inverse is true of options you dislike.

Interestingly, if you get a new piece of information about an option’s benefits, you will also decrease your assessment of the risks, even though you haven’t gotten any new information about the risks. You just feel better about the option, which makes you downplay the risks.

Vulnerability to Bias

We’re more vulnerable to biases when System 2 is taxed.

To explain this, psychologist Daniel Gilbert has a model of how we come to believe ideas:

- System 1 constructs the best possible interpretation of the belief - if the idea were true, what does it mean?

- System 2 evaluates whether to believe the idea - “unbelieving” false ideas.

When System 2 is taxed, then it does not attack System 1’s belief with as much scrutiny. Thus, we’re more likely to accept what it says.

Experiments show that when System 2 is taxed (like when forced to hold digits in memory), you become more susceptible to false sentences. You’ll believe almost anything.

This might explain why infomercials are effective late at night. It may also explain why societies in turmoil might apply less logical thinking to persuasive arguments, such as Germany during Hitler’s rise.

Part 2: Heuristics and Biases | 1: Statistical Mistakes

Kahneman transitions to Part 2 from Part 1 by explaining more heuristics and biases we’re subject to.

The general theme of these biases: we prefer certainty over doubt. We prefer coherent stories of the world, clear causes and effects. Sustaining incompatible viewpoints at once is harder work than sliding into certainty. A message, if it is not immediately rejected as a lie, will affect our thinking, regardless of how unreliable the message is.

Furthermore, we pay more attention to the content of the story than to the reliability of the data. We prefer simpler and coherent views of the world and overlook why those views are not deserved. We overestimate causal explanations and ignore base statistical rates. Often, these intuitive predictions are too extreme, and you will put too much faith in them.

This chapter will focus on statistical mistakes - when our biases make us misinterpret statistical truths.

The Law of Small Numbers

The smaller your sample size, the more likely you are to have extreme results. When you have small sample sizes, do NOT be misled by outliers.

A facetious example: in a series of 2 coin tosses, you are likely to get 100% heads. This doesn’t mean the coin is rigged.

In this case, the statistical mistake is clear. But in more complicated scenarios, outliers can be deceptive.

Case 1: Cancer Rates in Rural Areas

A study found that certain rural counties in the South had the lowest rates of kidney cancer. What was special about these counties - something about the rigorous hard work of farming, or the free open air?

The same study then looked at the counties with the highest rates of kidney cancer. Guess what? They were also rural areas!

We can infer that the fresh air and additive-free food of a rural lifestyle explain low rates of kidney cancer; we can also infer that the poverty and high-fat diet of a rural lifestyle explain high rates of kidney cancer. But we can’t have it both ways. It doesn’t make sense to attribute both low and high cancer rates to a rural lifestyle.

If it’s not lifestyle, what’s the key factor here? Population size. The outliers in the high-cancer areas appeared merely because the populations were so small. By random chance, some rural counties would have a spike of cancer rates. Small numbers skew the results.

Case 2: Small Classrooms

The Gates Foundation studied educational outcomes in schools and found small schools were habitually at the top of the list. Inferring that something about small schools led to better outcomes, the foundation tried to apply small-school practices at large schools, including lowering the student-teacher ratio and decreasing class sizes.

These experiments failed to produce the dramatic gains they were hoping for.

Had they inverted the question - what are the characteristics of the worst schools? - they would have found these schools to be smaller than average as well.

When falling prey to the Law of Small Numbers, System 1 is finding spurious causal connections between events. It is too ready to jump to conclusions that make logical sense but are merely statistical flukes. With a surprising result, we immediately skip to understanding causality rather than questioning the result itself.

Even professional academics are bad at understanding this - they often trust the results of underpowered studies, especially when the conclusions fit their view of the world. (Shortform note: Kahneman clearly had a problem with this himself with the priming studies from Part 1!)

The name of this law comes from the facetious idea that “the law of large numbers applies to small numbers as well.”

The only way to get statistical robustness is to compute the sample size needed to convincingly demonstrate a difference of a certain magnitude. The smaller the difference, the larger the sample needed to get statistical significance on the difference.

Focusing on the Story Rather than the Reliability

Consider this result: “In a telephone poll of 300 seniors, 60% support the president.”

If you were asked to summarize this in a few words, you’d likely end with something like “old people like the president.”

You don’t react much differently if the sample were with 150 people or 3000 people. You are not adequately sensitive to sample size.

Obviously, if the figures are way off (6 seniors were asked, or 600 million were asked), System 1 detects a surprise and kicks it to System 2 to reject. (But note weaknesses in small sample size can also be easily disguised, as in the common phrasing “6 out of 10 seniors”.)

Extending this further, you don’t always discriminate between “I heard from a smart friend” and “I read in the New York Times.” As long as you don’t immediately reject the story, you tend to accept it as 100% true.

A Misunderstanding of Randomness

People tend to expect randomness to occur regularly. For coin flips yielding heads or tails, the following sequences all have equal probability:

HHHTTT

TTTTTT

HTHTTH

However, sequence 3 “looks” far more random. Sequence 1 and 2 are more likely to trigger a desire for alternative explanations. (Shortform note: the illusion also occurs because there is only one such sequence of TTTTTT, but hundreds of the type like the third that we don’t strongly distinguish between.)

Corollary: we look for patterns where none exist.

Other examples:

- In World War II, London was bombed evenly throughout except for a few conspicuous gaps. Some suspected German spies were in those areas and thus those areas were deliberately saved. In reality, the distribution of hits were typical of a random process.

- There is no such thing as a hot hand in basketball - statistical analyses show that these streaks of baskets are entirely random.

Evolutionarily, the tendency to attribute patterns to randomness might have arisen out of a margin of safety for hazardous situations. That is, if a pack of lions suddenly seems to double, you don’t try to think about whether this is just a random statistical fluctuation. You just assume there’s a cause and impending danger, and you leave.

Causal Situations

Individual cases are often overweighted relative to statistics. In other words, even when we get accurate statistics about a situation, we still tend to focus on what individual cases tell us.

This was shown to great effect when psychology students were taught about troubling experiments like the Milgram shocking experiment, where 26 of 40 ordinary participants delivered the highest voltage shock.

Students were then shown videos of two normal-seeming people. These people didn’t seem the type to voluntarily shock a stranger. The students were asked: how likely were these individuals to have delivered the highest voltage shock?

The students guessed a chance far below 26/40, the statistical rate they had just been given.

This is odd. The students hadn’t learned anything at all! They had exempted themselves from the conclusions of experiments. “Surely the people who administered the shocks were depraved in some way - I would have behaved better, and normal people like these two folks would as well.” They ignored the statistics in favor of the individual cases given to them.

The antidote to this was to reverse the order - students were told about the experimental setup, shown the videos of the two people, and only then told the outcome of how 26 out of 40 ordinary participants had delivered the maximum shock. Their estimate of the failure rate of the two individuals became much more accurate.

Reversion to the Mean

Over repeated sampling periods, outliers tend to revert to the mean. High performers show disappointing results when they fail to continue delivering; strugglers show sudden improvement.

In reality, this is just statistical fluctuation. However, as the theme of this section suggests, we tend to see patterns where there are none. We come up with cute causal explanations for why the high performers faltered, and why the strugglers improved.

Here are examples of reversion to the mean:

- A military commander has two units return, one with 20% casualties and another with 50% casualties. He praises the first and berates the second. The next time, the two units return with the opposite results. From this experience, he “learns” that praise weakens performance and berating increases performance.

- In the Small Numbers example above, if the Gates Foundation had measured the schools again in subsequent years, they’d find the top-performing small schools would have lost their top rankings.

- Many health problems tend to resolve on their own, given our bodies’ natural healing properties. However, patients attribute the healing to whatever action they took meanwhile - seeing a doctor, doing a superstitious act, taking medicine - while ignoring that they likely would have returned to full health without any action.

- Athletes on the cover of Sports Illustrated seem to do much worse after being featured. This is explained as the athlete buckling under the pressure of being put under the spotlight. In reality, this could simply be because the athlete had had an outlier year (which got them the attention to get on the cover) and has now reverted to the mean.

- In dating, there is an idea that intelligent women tend to marry men who are less intelligent than they are. The reasons given are that smart men are threatened by intelligence, or that smart women want to be dominant. The statistical explanation is that intelligent women are a minority of women (by definition of the bell curve), and so when an intelligent woman marries a man, the man is simply statistically more likely to be less intelligent than the woman.

- The majority of mutual funds do not deliver returns better than the market, after deducting fees. However, individually, they may do well one year or another and thus have the appearance of shrewd financial insight.

- Publications like Fortune often release a list of “Most Admired Companies.” Over time, they find that the firms with the worst ratings earn much higher stock returns than the most admired firms. The causal explanation given is that the top firms rest on their laurels, while the worst companies get inspiration from being ranked poorly. In reality, both types of firms are just reverting to the mean.

Reversion to the mean occurs when the correlation between two measures is imperfect, and so one data point cannot predict the next data point reliably. The “phenomena” above can be restated in these terms: “the correlation between year 1 and year 2 of an athlete’s career is imperfect”; “the correlation of performance of a mutual fund between year 1 and year 2 is imperfect.”

In other words, when we ignore reversion to the mean, we overestimate the correlation between the two measures. When we see an athlete with an outlier performance in one year, we expect that to continue. When it doesn’t, we come up with causal explanations rather than realizing we simply overestimated the correlation.

These causal explanations can give rise to superstitions and misleading rules (“I swear by this treatment for stomach pain.”)

Antidote to this bias: when looking at high and low performers, question what fundamental factors are actually correlated with performance. Then, based on these factors, predict which performers will continue and which will revert to the mean.

Part 2-2: Anchors

Anchoring describes the bias where you depend too heavily on an initial piece of information when making decisions.

In quantitative terms, when you are exposed to a number, then asked to estimate an unknown quantity, the initial number affects your estimate of the unknown quantity. Surprisingly, this happens even when the number has no meaningful relevance to the quantity to be estimated.

Examples of anchoring:

- Students are split into two groups. One group is asked if Gandhi died before or after age 144. The other group is asked if Gandhi died before or after age 32. Both groups are then asked to estimate what age Gandhi actually died at. The first group, who were asked about age 144, estimated a higher age of death than students who were asked about age 32, with a difference in average guesses of over 15 years.

- Students were shown a wheel of fortune game that had numbers on it. The game was rigged to show only the numbers 10 or 65. The students were then asked to estimate the % of African nations in the UN. The average estimates came to 25% and 45%, based on whether they were shown 10 or 65, respectively.

- A nonprofit requested different amounts of donations in its requests. When it requested $400, the average donation was $143; when requesting $5, the average donation was $20.

- In online auctions, the Buy Now prices serve as anchors for the final price.

- Arbitrary rationing, like supermarkets with “limit 12 per person,” makes people buy more cans, compared to when there’s no limit. (Shortform note: this might also be confounded as a signal of demand, indicating quality or scarcity.)

Note how in several examples above, the number given is not all that relevant to the question at hand. The wheel of fortune number has nothing to do with African countries in the UN; the requested donation size should have little effect on how much you personally want to donate. But it still has an effect.

Sometimes, the anchor works because you infer the number is given for a reason, and it’s a reasonable place to adjust from. But again even meaningless numbers, even dice rolls, can anchor you.

The anchoring index measures how effective the anchor is. The index is defined as: (the difference between the average guesses when exposed to two different anchors) / (the difference between the two anchors). Studies show this index can be over 50%! (A measure of 100% would mean the person in question is not only influenced by the anchor but uses the actual anchor number as their estimate; conversely, a measure of 0% would indicate the person has ignored the anchor entirely.)

- For example, one study asked Group A two questions: 1) Is the tallest redwood taller or shorter than 1,200 feet? and 2) What do you think the height of the tallest redwood is? They asked Group B the same two questions, except the anchor in the first question was 180 feet rather than 1,200 feet.

- Did the anchors in the first question affect the estimates given in answer to the second question? Yes. The Group A mean was 844 feet and the Group B mean was 282 feet. This produced an anchoring index of 55%.

Insidiously, people take pride in their supposed immunity to anchored numbers. But you really don’t have full command of your cognition.

- When you’re buying a house, real estate agents claim to be immune to listing prices when negotiating prices for you, when the opposite is true. The listing price strongly anchors agents to the bid that they make.

(Shortform note: the idea of anchoring can be taken beyond numbers into ideas. If someone tells you an extremely outrageous idea, then later gives you a second idea that is less extreme, the second idea sounds less controversial than if he had presented it to you first. That’s because you’ve anchored to the first extreme idea.)

Why Anchoring Works

How does anchoring work in the brain? There are two mechanisms, based on the two systems of thinking.

System 2: You start with the exposed number as an initial guess, then adjust in one direction until you’re not confident you should adjust further. At this point you’ve reached the edge of your confidence interval, not the middle of it.

- Say you were given a piece of paper and asked to draw from the bottom up until you reached 2.5 inches. Then you were asked to draw, on a separate sheet of paper, from the top down until 2.5 inches were left. The first line would likely be shorter than the space below the second line. This is because you’re not really sure what 2.5 inches looks like. If you think of uncertainty as a range, you stop drawing at the bottom edge of your uncertainty, when you first lose confidence. You stop when you reach the edge of the confidence range, not the middle of it.

- You drive much faster on city streets coming off the highway than you would otherwise because your anchor is higher than when you start from, say, a speed of zero in your driveway. You adjust relative to your anchor.

- If asked about the boiling temperature of water at the top of Mount Everest, you know that the boiling point of water at sea level is 100° Celsius, but you know that can’t be the answer to the question, since the top of Mount Everest is obviously not at sea level. To estimate the answer, you use 100° Celsius as your anchor and adjust downwards.

- Evidence that System 2 is involved: People adjust less from the anchor when their mental resources are depleted and, therefore, System 2 isn’t working well.

System 1: The anchor invokes associations that influence your thinking. System 1 tries to construct a world in which the anchor is the true number.

- Thinking of Gandhi as age 144 primes associations of old age. This causes a higher estimate.

- Evidence that System 1 is involved: Asking participants whether the average temperature was higher or lower than 68°F made it easier to recognize summer words (like “beach”) in a list. Similarly, asking about 41°F made it easier to identify winter words (like “ski”).

Overcoming Anchors

In negotiations, when someone offers an outrageous anchor, don’t engage with an equally outrageous counteroffer. Instead, threaten to end the negotiation if that number is still on the table.

(Shortform suggestions:

- If you’re given one extreme number to adjust from, repeat your reasoning with an extreme value from the other direction. Adjust from there, then average your final two results.

- When estimating, first adjust from the anchor to where you feel like you should stop. Then deliberately go much further to the point that you want to dial it back. Average the two points.

- Move your estimate from the anchor to the minimal or maximal amount it could be.)

Part 2-3: Availability Bias

When trying to answer the question “what do I think about X?,” you actually tend to think about the easier but misleading questions, “what do I remember about X, and how easily do I remember it?” The more easily you remember something, the more significant you perceive what you’re remembering to be. In contrast, things that are hard to remember are lowered in significance.

More quantitatively, when trying to estimate the size of a category or the frequency of an event, you instead use the heuristic: how easily do the instances come to mind? Whatever comes to your mind more easily is weighted as more important or true. This is the availability bias.

This means a few things:

- Items that are easier to recall take on greater weight than they should.

- When estimating the size of a category, like “dangerous animals,” if it’s easy to retrieve items for a category, you’ll judge the category to be large.

- When estimating the frequency of an event, if it’s easy to think of examples, you’ll perceive the event to be more frequent.

In practice, this manifests in a number of ways:

- Events that trigger stronger emotions (like terrorist attacks) are more readily available than events that don’t (like diabetes), causing you to overestimate the importance of the more provocative events.

- More recent events are more available than past events, and are therefore judged to be more important.

- More vivid, visual examples are more available than mere words. For instance, it’s easier to remember the details of a painting than it is to remember the details of a passage of text. Consequently, we often value visual information over verbal.

- Personal experiences are more available than statistics or data.

- Famously, spouses were asked for their % contribution to household tasks. When you add both spouses’ answers, the total tends to be more than 100% - for instance, each spouse believes they contribute 70% of household tasks. Because of availability bias, one spouse primarily sees the work they had done and not their spouse’s contribution, and so each spouse believes they contributed unequally more.

- Items that are covered more in popular media take on a greater perceived importance than those that aren’t, even if the topics that aren’t covered have more practical importance.

As we’ll discuss later in the book, availability bias also tends to influence us to weigh small risks as too large. Parents who are anxiously waiting for their teenage child to come home at night are obsessing over the fears that are readily available to their minds, rather than the realistic, low chance that the child is actually in danger.

Within the media, availability bias can cause a vicious cycle where something minor gets blown out of proportion:

- A minor curious event is reported. A group of people overreact to the news.

- News about the overreaction triggers more attention and coverage of the event. Since media companies make money from reporting worrying news, they hop on the bandwagon and make it an item of constant news coverage.

- This continues snowballing as increasingly more people see this as a crisis.

- Naysayers who say the event is not a big deal are rejected as participating in a coverup.

- Eventually, all of this can affect real policy, where scarce resources are used to solve an overreaction rather than a quantitatively more important problem.

Kahneman cites the example of New York’s Love Canal in the 1970s, where buried toxic waste polluted a water well. Residents were outraged, and the media seized on the story, claiming it was a disaster. Eventually legislation was passed that mandated the expensive cleanup of toxic sites. Kahneman argues that the pollution has not been shown to have any actual health effects, and the money could have been spent on far more worthwhile causes to save more lives.

He also points to terrorism as today’s example of an issue that is reported widely by the media. As a result, terrorism is more available in our minds than the actual danger it presents, where a very small fraction of the population dies from terrorist attacks.

Kahneman is sympathetic to the biases, however—he notes that even irrational fear is debilitating, and policymakers need to protect the public from fear, not just real dangers.

Running Out of Availability Makes You Less Confident

A series of experiments asked people to list a number of instances of a situation (such as 6 examples of when they were assertive). Then they were asked to answer a question (such as “evaluate how assertive you are”).

Question: what has a greater effect on a person’s perception of how assertive they are—the number of examples they can come up with, or the ease of recall? In other words, does someone who comes up with 10 examples of when they are assertive feel more confident than someone who comes up with 3?

You might think more examples would strengthen conviction, but being forced to think of more examples actually lowers your confidence. When people are asked to name 6 examples of their assertiveness, they feel more assertive than those asked to name 12 examples. The difficulty of scraping up the last few examples dampens one’s confidence.